
Fit Data with a Shallow Neural Network

Neural networks are well suited to function fitting. In fact, there is proof that a fairly simple neural network can fit nearly any practical function.

Suppose, for instance, that you have data from a health clinic. You want to design a network that can predict a person's body fat percentage from 13 anatomical measurements. You have a total of 252 example people, each with those 13 measurements and an associated body fat percentage.

You can solve this problem in two ways:

Use the Neural Net Fitting app, as described in Fit Data Using the Neural Net Fitting App.

Use command-line functions, as described in Fit Data Using Command-Line Functions.

It is generally best to start with the app and then use it to automatically generate command-line scripts. Before using either method, first define the problem by selecting a data set. Each of the neural network apps has access to many sample data sets that you can use to experiment with the toolbox (see Sample Data Sets for Shallow Neural Networks). If you have a specific problem that you want to solve, you can also load your own data into the workspace. The next section describes the data format.

Tip

To interactively build and visualize deep learning neural networks, use the Deep Network Designer app. For more information, see Get Started with Deep Network Designer.

Defining a Problem

To define a fitting (regression) problem for the toolbox, arrange a set of input vectors (predictors) as columns in a matrix. Then arrange a set of responses (the correct output vectors for each of the input vectors) into a second matrix. For example, you can define a regression problem with four observations, each with two input features and a single response, as follows:

predictors = [0 1 0 1; 0 0 1 1];
responses = [0 0 0 1];
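The column-per-observation layout can be checked before any training starts. The following is a minimal sketch; the variable names numFeatures and numObservations are illustrative and not part of the toolbox.

```matlab
% Each column is one observation; each row is one feature.
predictors = [0 1 0 1; 0 0 1 1];
responses  = [0 0 0 1];

% For this example: 2 features (rows) and 4 observations (columns).
[numFeatures, numObservations] = size(predictors);

% The responses must supply exactly one column per observation.
assert(size(responses, 2) == numObservations, ...
    'Predictors and responses must have the same number of columns.');

fprintf('%d features, %d observations\n', numFeatures, numObservations);
```

A mismatch in the number of columns is a common cause of errors when training on your own data, so a check like this is cheap insurance.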

The next section shows how to train a network to fit a data set using the Neural Net Fitting app. This example uses a sample data set provided with the toolbox.

Fit Data Using the Neural Net Fitting App

This example shows how to train a shallow neural network to fit data using the Neural Net Fitting app.

Open the Neural Net Fitting app using the nftool command.

nftool

Select Data

The Neural Net Fitting app includes example data to help you get started with training a neural network.

To import example body fat data, select Import > Import Body Fat Data Set. You can use this data set to train a neural network that estimates a person's body fat from various measurements. If you import your own data from a file or from the workspace, you must specify the predictors and responses, and whether the observations are in rows or columns.

Information about the imported data appears in the Model Summary. This data set contains 252 observations, each with 13 features. The responses contain the body fat percentage for each observation.

Split the data into training, validation, and test sets. Keep the default settings. The data is split as follows:

70% for training.

15% for validation, to check whether the network is generalizing and to stop training before overfitting occurs.

15% to independently test whether the network generalizes.

For more information on data division, see the toolbox documentation on data division.

Create Network

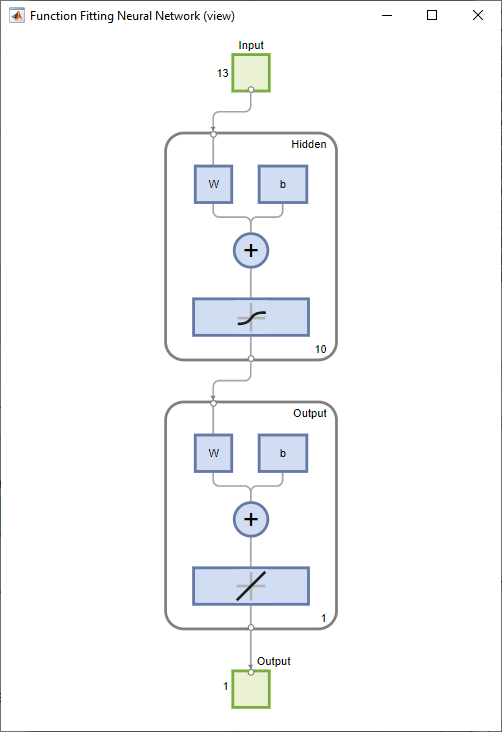

The network is a two-layer feedforward network with a sigmoid transfer function in the hidden layer and a linear transfer function in the output layer. The Layer size value defines the number of hidden neurons. Keep the default layer size, 10. The network architecture appears in the Network pane. The network diagram updates to reflect the input data. In this example, the data has 13 inputs (features) and one output.

Train Network

To train the network, select Train > Train with Levenberg-Marquardt. This is the default training algorithm and is the same algorithm used when you click Train.

Training with Levenberg-Marquardt (trainlm) is recommended for most problems. For noisy or small problems, Bayesian Regularization (trainbr) can produce better results, but training takes longer. For large problems, Scaled Conjugate Gradient (trainscg) is recommended because it uses gradient calculations, which are more memory efficient than the Jacobian calculations used by the other two algorithms.

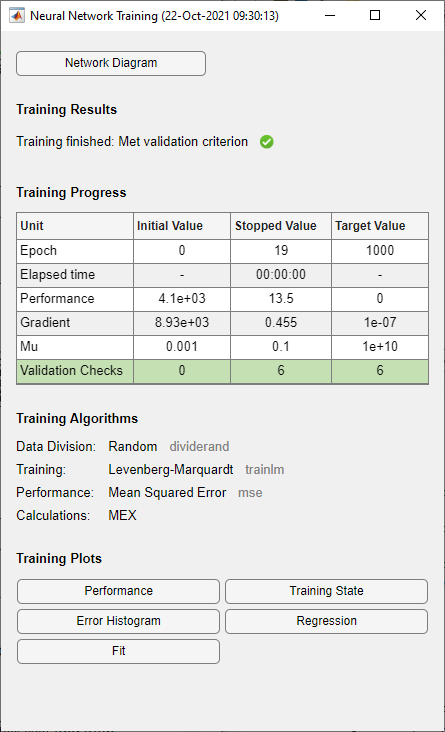

The Training pane shows the training progress. Training continues until one of the stopping criteria is met. In this example, training continues until the validation error has been greater than or equal to the previously smallest validation error for six consecutive validation iterations ("Met validation criterion").

Analyze Results

The Model Summary contains information about the training algorithm and the training results for each data set.

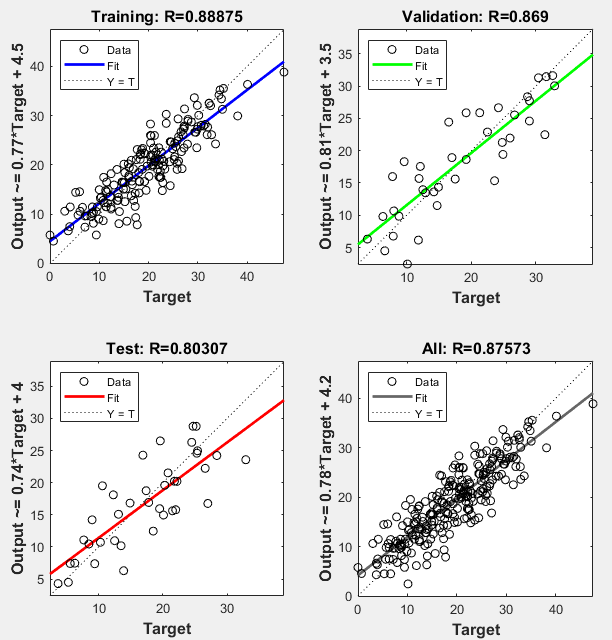

You can analyze the results further by generating plots. To plot the linear regression, in the Plots section, click Regression. The regression plot shows the network predictions (output) against the responses (target) for the training, validation, and test sets.

For a perfect fit, the data should fall along a 45-degree line, where the network outputs equal the responses. For this problem, the fit is reasonably good for all data sets. If you need more accurate results, you can retrain the network by clicking Train again. Each training run starts from different initial network weights and biases, so retraining can produce an improved network.

The error histogram gives an additional check on network performance. In the Plots section, click Error Histogram.

The blue bars represent training data, the green bars represent validation data, and the red bars represent test data. The histogram indicates outliers, which are data points where the fit is significantly worse than for most of the data. It is a good idea to check the outliers to determine whether the data is bad or whether those data points differ from the rest of the data set. If the outliers are valid data points but are unlike the rest of the data, then the network is extrapolating for these points. In that case, collect more data that resembles the outlier points and retrain the network.

If you are not satisfied with the network performance, you can do one of the following:

Train the network again.

Increase the number of hidden neurons.

Use a larger training data set.

If performance on the training set is good but performance on the test set is poor, the model might be overfitting. Reducing the number of neurons can reduce overfitting.

You can also evaluate the network performance on an additional test set. To load additional test data for evaluating the network, in the Test section, click Test. The Model Summary displays the additional test results. You can also generate plots to analyze the additional test results.

Generate Code

Select Generate Code > Generate Simple Training Script to create MATLAB code that reproduces the previous steps from the command line. Creating MATLAB code can be helpful if you want to learn how to use the command-line functionality of the toolbox to customize the training process. In Fit Data Using Command-Line Functions, you examine the generated scripts in more detail.

Export Network

You can export your trained network to the workspace or to Simulink®. You can also deploy the network with MATLAB Compiler™ tools and other MATLAB code generation tools. To export your trained network and results, select Export Model > Export to Workspace.

Fit Data Using Command-Line Functions

The easiest way to learn how to use the command-line functionality of the toolbox is to generate scripts from the apps and then modify them to customize the network training. As an example, look at the simple script that was created in the previous section using the Neural Net Fitting app.

% Solve an Input-Output Fitting problem with a Neural Network
% Script generated by Neural Fitting app
% Created 15-Mar-2021 10:48:13
%
% This script assumes these variables are defined:
%
%   bodyfatInputs - input data.
%   bodyfatTargets - target data.

x = bodyfatInputs;
t = bodyfatTargets;

% Choose a Training Function
% For a list of all training functions type: help nntrain
% 'trainlm' is usually fastest.
% 'trainbr' takes longer but may be better for challenging problems.
% 'trainscg' uses less memory. Suitable in low memory situations.
trainFcn = 'trainlm';  % Levenberg-Marquardt backpropagation.

% Create a Fitting Network
hiddenLayerSize = 10;
net = fitnet(hiddenLayerSize,trainFcn);

% Setup Division of Data for Training, Validation, Testing
net.divideParam.trainRatio = 70/100;
net.divideParam.valRatio = 15/100;
net.divideParam.testRatio = 15/100;

% Train the Network
[net,tr] = train(net,x,t);

% Test the Network
y = net(x);
e = gsubtract(t,y);
performance = perform(net,t,y)

% View the Network
view(net)

% Plots
% Uncomment these lines to enable various plots.
%figure, plotperform(tr)
%figure, plottrainstate(tr)
%figure, ploterrhist(e)
%figure, plotregression(t,y)
%figure, plotfit(net,x,t)

You can save the script and then run it from the command line to reproduce the results of the previous training session. You can also edit the script to customize the training process. In this case, follow each step in the script.

Select Data

The script assumes that the predictor and response vectors are already loaded into the workspace. If the data is not loaded, you can load it as follows:

load bodyfat_dataset

This command loads the predictors bodyfatInputs and the responses bodyfatTargets into the workspace.

This data set is one of the sample data sets that are part of the toolbox. For information about the available data sets, see Sample Data Sets for Shallow Neural Networks. To see a list of all available data sets, enter the command help nndatasets. You can load the variables from any of these data sets using your own variable names. For example, the command

[x,t] = bodyfat_dataset;

loads the body fat predictors into the array x and the body fat responses into the array t.

Choose Training Algorithm

Choose the training algorithm. The network uses the default Levenberg-Marquardt algorithm (trainlm) for training.

trainFcn = 'trainlm'; % Levenberg-Marquardt backpropagation.

For problems where Levenberg-Marquardt does not produce results as accurate as desired, set the network training function to Bayesian Regularization (trainbr). For problems with large amounts of data, set it to Scaled Conjugate Gradient (trainscg). Use one of the following commands, not both:

net.trainFcn = 'trainbr';
net.trainFcn = 'trainscg';

Create Network

Create a network. The default network for function fitting (or regression) problems, fitnet, is a feedforward network with the tan-sigmoid transfer function in the hidden layer and the linear transfer function in the output layer. The network has a single hidden layer with ten neurons (the default). The network has one output neuron, because there is only one response value associated with each input vector.

hiddenLayerSize = 10;
net = fitnet(hiddenLayerSize,trainFcn);

Note

More neurons require more computation and tend to overfit the data when the number is set too high, but they allow the network to solve more complicated problems. More layers require more computation, but their use might allow the network to solve complex problems more efficiently. To use more than one hidden layer, enter the hidden layer sizes as elements of an array in the fitnet command.
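As a minimal sketch of the array form, the following creates a network with two hidden layers. The sizes 10 and 5 are chosen only for illustration and are not a recommendation for the body fat problem.

```matlab
% Two hidden layers with 10 and 5 neurons (illustrative sizes only).
trainFcn = 'trainlm';
net = fitnet([10 5], trainFcn);

% The layer count includes the output layer:
% two hidden layers plus one output layer.
disp(net.numLayers)
```

Passing a scalar, as in fitnet(10,trainFcn), gives the single-hidden-layer network used in the rest of this example.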

Divide Data

Set up the division of data.

net.divideParam.trainRatio = 70/100;
net.divideParam.valRatio = 15/100;
net.divideParam.testRatio = 15/100;

With these settings, the predictor and response vectors are divided at random, with 70% used for training, 15% for validation, and 15% for testing. For more information about the data division process, see the toolbox documentation on data division.
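To see what a random split with these ratios looks like, you can call the division function directly. This is a sketch that assumes the network uses the random division function dividerand, which is the default for fitnet; the sample count 252 matches the body fat data set.

```matlab
% Split 252 sample indices at random using the same ratios as the script.
[trainInd, valInd, testInd] = dividerand(252, 0.70, 0.15, 0.15);

% Every index lands in exactly one of the three sets.
fprintf('train: %d, validation: %d, test: %d\n', ...
    numel(trainInd), numel(valInd), numel(testInd));
```

Because the assignment is random, the indices in each set differ from run to run unless you fix the random number generator seed first.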

Train Network

Train the network.

[net,tr] = train(net,x,t);

During training, the training progress window opens. You can interrupt training at any point by clicking the stop button.

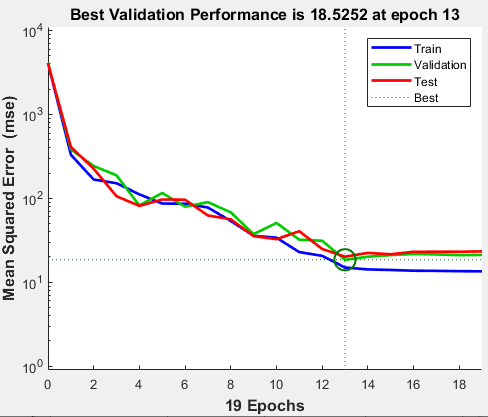

Training finished when the validation error had been greater than or equal to the previously smallest validation error for six consecutive validation iterations. If you click Performance in the training window, a plot of the training errors, validation errors, and test errors appears. In this example, the result is reasonable for the following reasons:

The final mean squared error is small.

The test set error and the validation set error have similar characteristics.

No significant overfitting has occurred by epoch 13 (where the best validation performance occurs).
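The same information is available from the training record without opening the plot. The following is a sketch rather than part of the generated script; it retrains a network with the training window suppressed, so the epoch and error values it prints will generally differ from those quoted above.

```matlab
[x, t] = bodyfat_dataset;
net = fitnet(10, 'trainlm');
net.trainParam.showWindow = false;   % suppress the training window for scripted runs
[net, tr] = train(net, x, t);

% Number of consecutive validation failures that stops training (6 by default).
fprintf('max_fail: %d\n', net.trainParam.max_fail);

% Why training stopped and where validation performance was best.
fprintf('Stopped because: %s\n', tr.stop);
fprintf('Best validation epoch: %d\n', tr.best_epoch);
fprintf('MSE at that epoch (train/val/test): %.4f / %.4f / %.4f\n', ...
    tr.best_perf, tr.best_vperf, tr.best_tperf);
```

Comparing tr.best_vperf and tr.best_tperf is a quick numeric version of the check that the validation and test errors behave similarly.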

Test Network

Test the network. After the network has been trained, you can use it to compute the network outputs. The following code calculates the network outputs, errors, and overall performance.

y = net(x);
e = gsubtract(t,y);
performance = perform(net,t,y)

performance = 16.2815

You can also calculate the network performance on the test set only, by using the test indices, which are stored in the training record tr.

tInd = tr.testInd;
tstOutputs = net(x(:,tInd));
tstPerform = perform(net,t(tInd),tstOutputs)

tstPerform = 20.1698
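The performance value is a mean squared error, so its square root is easier to interpret: it is in the same units as the response (percentage points of body fat). The following sketch computes both by hand on the test set. It assumes the network keeps the default mse performance function with no regularization, in which case the hand-computed value should agree with perform. The variable names tstMSE and tstRMSE are illustrative.

```matlab
[x, t] = bodyfat_dataset;
net = fitnet(10, 'trainlm');
net.trainParam.showWindow = false;   % suppress the training window for scripted runs
[net, tr] = train(net, x, t);

% Outputs and errors on the held-out test observations only.
tInd = tr.testInd;
tstOutputs = net(x(:, tInd));
tstErrors  = t(tInd) - tstOutputs;

tstMSE  = mean(tstErrors.^2);   % mean squared error on the test set
tstRMSE = sqrt(tstMSE);         % same units as the body fat percentage

fprintf('Test MSE: %.4f, test RMSE: %.4f\n', tstMSE, tstRMSE);
```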

View Network

View the network diagram.

view(net)

Analyze Results

Analyze the results. To perform a linear regression between the network predictions (outputs) and the corresponding responses (targets), click Regression in the training window.
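The regression can also be computed from the command line with the regression function, which returns the correlation coefficient together with the slope and intercept of the fitted line. This is a sketch on a freshly trained network, so the R-value it prints will vary from run to run.

```matlab
[x, t] = bodyfat_dataset;
net = fitnet(10, 'trainlm');
net.trainParam.showWindow = false;   % suppress the training window for scripted runs
[net, tr] = train(net, x, t);

% Linear regression of network outputs on targets over the whole data set.
y = net(x);
[r, m, b] = regression(t, y);
fprintf('R = %.3f, slope = %.3f, intercept = %.3f\n', r, m, b);
```

A slope near 1 and an intercept near 0 correspond to points lying along the 45-degree line in the regression plot.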

The output tracks the responses well for the training, test, and validation sets, and the R-value for the total data set is over 0.87. If you need even more accurate results, you can try any of these approaches:

Reset the initial network weights and biases to new values with init and train again.

Increase the number of hidden neurons.

Use a larger training data set.

Increase the number of input values, if more relevant information is available.

Try a different training algorithm, such as trainbr or trainscg.

In this case, the network response is satisfactory, and you can now apply the network to new data.
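The first approach in the list and the final step can be sketched as follows. The first five observations of the data set stand in for new data here, which is an assumption made only to keep the example self-contained; in practice xNew would hold measurements the network has not seen.

```matlab
[x, t] = bodyfat_dataset;
net = fitnet(10, 'trainlm');
net.trainParam.showWindow = false;   % suppress the training window for scripted runs
net = train(net, x, t);

% Reset weights and biases to new initial values, then train again.
net = init(net);
net = train(net, x, t);

% Apply the trained network to "new" observations (one column each).
xNew = x(:, 1:5);
yNew = net(xNew);
disp(yNew)
```

New data must use the same layout as the training data: 13 rows, one column per observation.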

Next Steps

To get more experience with command-line operations, you can try these tasks:

During training, open a plot window (such as the regression plot) and watch it update.

Plot from the command line with functions such as plotfit, plotregression, plottrainstate, and plotperform.
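A short sketch of plotting from the command line, using the same calls that appear commented out at the end of the generated script:

```matlab
[x, t] = bodyfat_dataset;
net = fitnet(10, 'trainlm');
net.trainParam.showWindow = false;   % suppress the training window for scripted runs
[net, tr] = train(net, x, t);

y = net(x);
e = gsubtract(t, y);

figure, plotperform(tr)        % training, validation, and test error per epoch
figure, plottrainstate(tr)     % gradient, mu, and validation checks per epoch
figure, ploterrhist(e)         % histogram of the errors
figure, plotregression(t, y)   % outputs against targets
```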

For more options when training from the command line, see the advanced script.

Each time a neural network is trained, it can arrive at a different solution, because the initial weight and bias values are random and the data is divided differently into training, validation, and test sets. As a result, different neural networks trained on the same problem can give different outputs for the same input. To ensure that you have found a neural network with good accuracy, retrain several times.
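One way to retrain several times is a loop that keeps the network with the lowest validation error. The following is a minimal sketch under stated assumptions: the number of runs, the seed values, and the variable names numRuns, bestNet, bestTr, and bestValPerf are all illustrative, and selecting on validation error is one reasonable criterion rather than the method prescribed by the toolbox.

```matlab
[x, t] = bodyfat_dataset;

numRuns = 5;            % illustrative number of retraining runs
bestValPerf = Inf;

for k = 1:numRuns
    rng(k);             % illustrative seeds, so each run is repeatable
    net = fitnet(10, 'trainlm');
    net.trainParam.showWindow = false;   % suppress the training window
    [net, tr] = train(net, x, t);

    % Keep the network with the lowest validation error seen so far.
    if tr.best_vperf < bestValPerf
        bestValPerf = tr.best_vperf;
        bestNet = net;
        bestTr  = tr;
    end
end

fprintf('Best validation MSE over %d runs: %.4f\n', numRuns, bestValPerf);
```

Selecting on validation error rather than test error keeps the test set independent, so bestTr.best_tperf remains a fair estimate of how the chosen network generalizes.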

There are several other techniques for improving on initial solutions if higher accuracy is desired. For more information, see the toolbox documentation.

See Also

Neural Net Fitting | Neural Net Time Series | Neural Net Pattern Recognition | Neural Net Clustering | Deep Network Designer | trainlm | fitnet