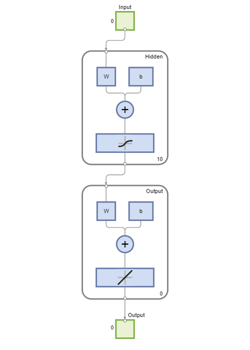

fitnet

Function fitting neural network

Description

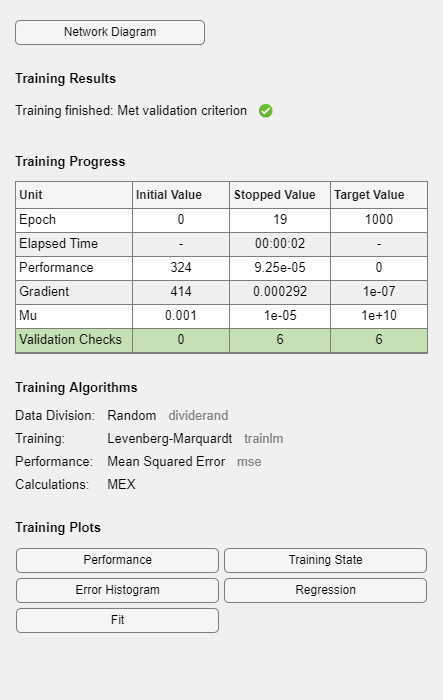

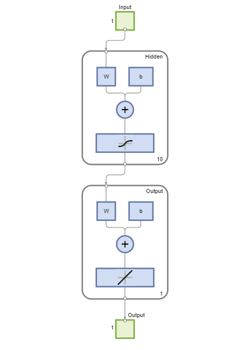

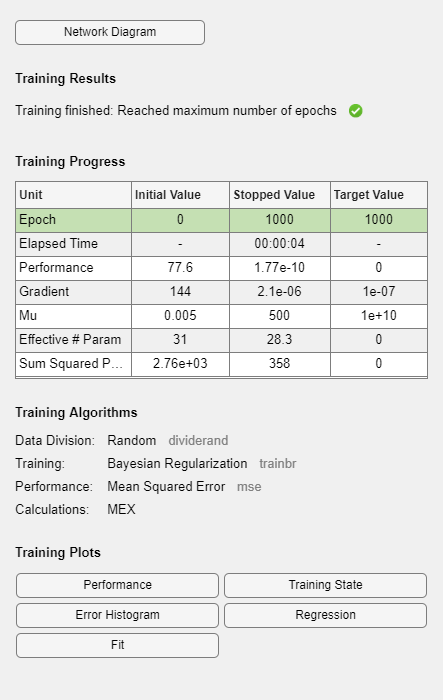

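net = fitnet(hiddenSizes) returns a function fitting neural network with a hidden layer size of hiddenSizes.

net = fitnet(hiddenSizes,trainFcn) returns a function fitting neural network with a hidden layer size of hiddenSizes and a training function specified by trainFcn.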
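The two syntaxes above can be sketched as follows. This is a minimal illustration, not an excerpt from the original page: the layer sizes (10 and [10 5]) and the choice of 'trainbr' as the training function are assumptions picked for the example.

```matlab
% Single hidden layer with 10 neurons; the training function is left at its default.
net1 = fitnet(10);

% Same hidden layer size, but with the training function named explicitly.
% 'trainbr' (Bayesian regularization) is an illustrative choice.
net2 = fitnet(10,'trainbr');

% A row vector requests several hidden layers, here with 10 and 5 neurons.
net3 = fitnet([10 5]);

% Inspect the configured training functions.
disp(net1.trainFcn)
disp(net2.trainFcn)
```

The networks returned here are untrained; their input and output sizes are only fixed once they are configured or trained on data.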

Examples

Input Arguments

Output Arguments

Tips

Function fitting is the process of training a neural network on a set of inputs to produce an associated set of target outputs. After you construct the network with the desired hidden layers and training algorithm, you must train it on a set of training data. Once the neural network has fit the data, it forms a generalization of the input-output relationship. You can then use the trained network to generate outputs for inputs it was not trained on.
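The workflow described above (construct, train, then apply to unseen inputs) might look like the following sketch. It assumes the simplefit_dataset sample data that ships with the toolbox; the odd/even split into training and held-out samples and the variable names are choices made for this example only.

```matlab
% Load a simple one-input, one-output sample data set.
[x,t] = simplefit_dataset;

% Hold out every second sample so the network can be checked on unseen inputs.
xTrain = x(:,1:2:end);  tTrain = t(:,1:2:end);
xNew   = x(:,2:2:end);  tNew   = t(:,2:2:end);

% Construct the network with one hidden layer of 10 neurons.
net = fitnet(10);
net.trainParam.showWindow = false;  % suppress the training GUI

% Train on the training subset only.
net = train(net,xTrain,tTrain);

% Generate outputs for inputs the network was not trained on.
yNew = net(xNew);

% Measure performance on the held-out samples (mean squared error by default).
perf = perform(net,tNew,yNew)
```

Because the initial weights are random, the value of perf varies from run to run.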

Version History

Introduced in R2010b