Import and Build Deep Neural Networks

Use transfer learning to take advantage of the knowledge provided by a pretrained network to learn new patterns in new data. Fine-tuning a pretrained network with transfer learning is typically much faster and easier than training from scratch. Using pretrained deep networks enables you to quickly create models for new tasks without defining and training a new network, collecting millions of observations, or needing a powerful GPU. Deep Learning Toolbox™ provides several pretrained networks suitable for transfer learning. You can also import networks from external platforms such as TensorFlow™ 2, TensorFlow-Keras, PyTorch®, the ONNX™ (Open Neural Network Exchange) model format, and Caffe.

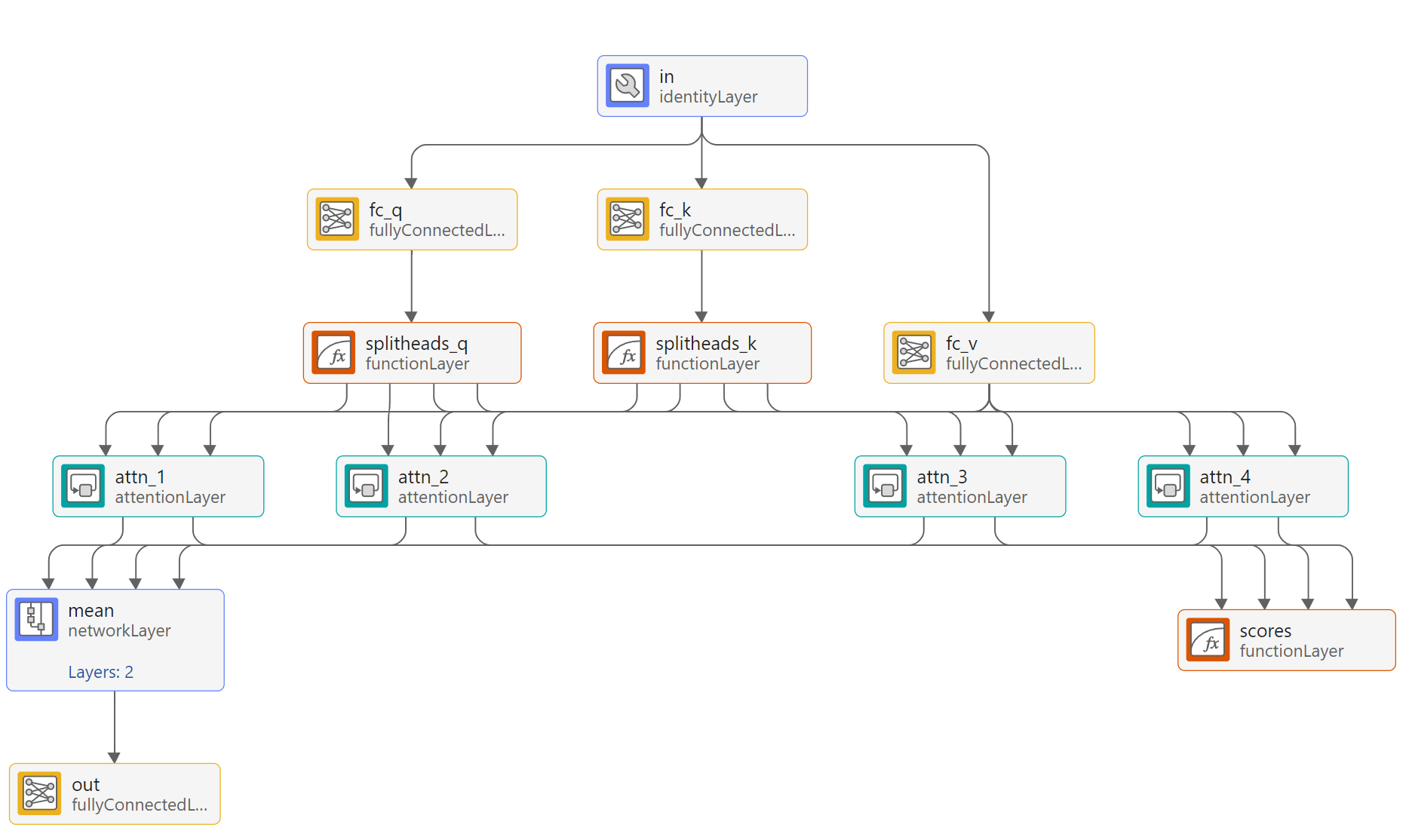

If transfer learning is not suitable for your task, then you can build networks from scratch using MATLAB® code or interactively using the Deep Network Designer app. If the built-in layers do not provide the layer that you need for your task, then you can define your own custom deep learning layer. You can define custom layers with learnable and state parameters. After you define a custom layer, you can check that the layer is valid, is GPU compatible, and outputs correctly defined gradients. For models that cannot be specified as networks of layers, you can define the model as a function.
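As a minimal sketch of building a network from scratch in MATLAB code, the following stacks a few built-in layers into a `dlnetwork` object and runs a forward pass on random data to check the output size. The input size (28-by-28 grayscale images), the number of filters, and the 10 output classes are illustrative assumptions rather than values from this page, and the name=value argument syntax assumes a reasonably recent MATLAB release.

```matlab
% Assumed example sizes: 28-by-28 grayscale images, 10 classes (illustrative only)
layers = [
    imageInputLayer([28 28 1])                 % image input layer
    convolution2dLayer(3, 8, Padding="same")   % 8 filters of size 3-by-3
    batchNormalizationLayer
    reluLayer
    fullyConnectedLayer(10)                    % one output per assumed class
    softmaxLayer];

% Assemble the layer array into a dlnetwork; learnable parameters are initialized
net = dlnetwork(layers);

% Forward pass on a random batch of 4 images ("SSCB" = spatial, spatial, channel, batch)
X = dlarray(rand(28, 28, 1, 4, 'single'), "SSCB");
Y = predict(net, X);

% Display the output size (classes-by-observations)
disp(size(Y))
```

For the function-based approach, you would instead write a model function that operates directly on `dlarray` inputs using deep learning operations such as `fullyconnect` and `relu`, and manage the learnable parameters yourself.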

Categories

- Built-In Pretrained Networks

Load built-in pretrained networks and perform transfer learning

- Pretrained Networks from External Platforms

Import pretrained networks from external deep learning platforms

- Deep Network Designer App

Interactively create and edit deep learning networks

- Built-In Layers

Build deep neural networks using built-in layers

- Custom Layers

Define custom layers for deep learning

- Operations

Develop custom deep learning functions