minibatchpredict

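Syntax

    Y = minibatchpredict(net,images)
    Y = minibatchpredict(net,sequences)
    Y = minibatchpredict(net,features)
    Y = minibatchpredict(net,data)
    Y = minibatchpredict(net,X1,...,XN)
    [Y1,...,YM] = minibatchpredict(___)
    [Y1,...,YM] = minibatchpredict(___,Name=Value)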

Description

[Y1,...,YM] = minibatchpredict(___,Name=Value) specifies additional options using one or more name-value arguments. For example, MiniBatchSize=32 makes predictions by looping over mini-batches of size 32.

Examples

This example shows how to make predictions using a dlnetwork object by looping over mini-batches.

For large data sets, or when predicting on hardware with limited memory, make predictions by looping over mini-batches of the data using the minibatchpredict function.

Load dlnetwork Object

Load a trained dlnetwork object and the corresponding class names into the workspace. The neural network has one input and two outputs. It takes images of handwritten digits as input and predicts the digit label and angle of rotation.

    load dlnetDigits

Load Data for Prediction

Load the digits test data for prediction.

    load DigitsDataTest

View the class names.

    classNames

    classNames = 10×1 cell
        {'0'}
        {'1'}
        {'2'}
        {'3'}
        {'4'}
        {'5'}
        {'6'}
        {'7'}
        {'8'}
        {'9'}

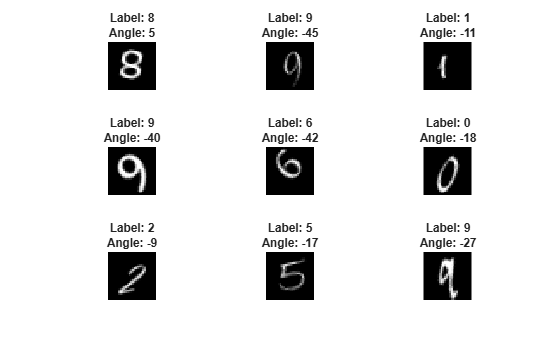

View some of the images and the corresponding labels and angles of rotation.

    numObservations = size(XTest,4);
    numPlots = 9;
    idx = randperm(numObservations,numPlots);

    figure
    for i = 1:numPlots
        nexttile(i)
        I = XTest(:,:,:,idx(i));
        label = labelsTest(idx(i));
        imshow(I)
        title("Label: " + string(label) + newline + "Angle: " + anglesTest(idx(i)))
    end

Make Predictions

Make predictions using the minibatchpredict function, and convert the classification scores to labels using the scores2label function. By default, the minibatchpredict function uses a GPU if one is available. Using a GPU requires a Parallel Computing Toolbox™ license and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox). Otherwise, the function uses the CPU. To select the execution environment manually, use the ExecutionEnvironment argument of the minibatchpredict function.

    [scoresTest,Y2Test] = minibatchpredict(net,XTest);
    Y1Test = scores2label(scoresTest,classNames);

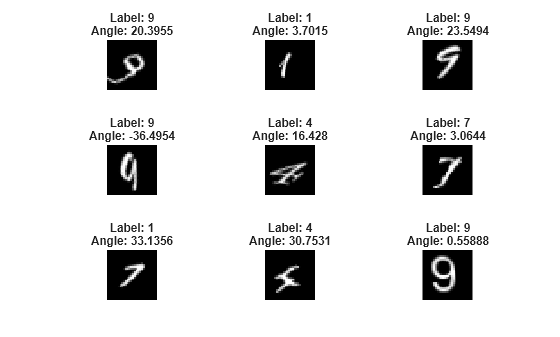

Visualize some of the predictions.

    idx = randperm(numObservations,numPlots);

    figure
    for i = 1:numPlots
        nexttile(i)
        I = XTest(:,:,:,idx(i));
        label = Y1Test(idx(i));
        imshow(I)
        title("Label: " + string(label) + newline + "Angle: " + Y2Test(idx(i)))
    end

Input Arguments

net

Neural network, specified as a dlnetwork object.

images

Image data, specified as a numeric array, categorical array, dlarray object, datastore, or minibatchqueue object.

Tip

For sequences of images, such as video data, use the sequences input argument instead.

If you have data that fits in memory and does not require additional processing, then specifying the input data as a numeric array is usually the easiest option. If you want to make predictions with image files stored on your system, or want to apply additional processing, then datastores are usually the easiest option.

Tip

Neural networks expect input data with a specific layout. For example, image classification networks typically expect image representations to be h-by-w-by-c numeric arrays, where h, w, and c are the height, width, and number of channels of the images, respectively. Neural networks typically have an input layer that specifies the expected layout of the data.

Most datastores and functions return data in the layout that the network expects. If your data is in a different layout, then indicate the layout by using the InputDataFormats name-value argument or by specifying the input data as a formatted dlarray object. Specifying the InputDataFormats name-value argument is usually easier than adjusting the layout of the input data manually.

For neural networks that do not have input layers, you must use the InputDataFormats name-value argument or formatted dlarray objects.

For more information, see Deep Learning Data Formats.

Numeric Array or dlarray Object

For data that fits in memory and does not require additional processing, you can specify a data set of images as a numeric array or a dlarray object.

The layouts of numeric arrays and unformatted dlarray objects depend on the type of image data and must be consistent with the InputDataFormats argument.

Most networks expect image data in these layouts.

| Data | Layout |
|---|---|
| 2-D images | h-by-w-by-c-by-N array, where h, w, and c are the height, width, and number of channels of the images, respectively, and N is the number of images. Data in this layout has the data format "SSCB" (spatial, spatial, channel, batch). |
| 3-D images | h-by-w-by-d-by-c-by-N array, where h, w, d, and c are the height, width, depth, and number of channels of the images, respectively, and N is the number of images. Data in this layout has the data format "SSSCB" (spatial, spatial, spatial, channel, batch). |

For data in a different layout, indicate that your data has a different layout by using the InputDataFormats argument, or use a formatted dlarray object instead. For more information, see Deep Learning Data Formats.

Categorical Array (since R2025a)

For images of categorical values (such as labeled pixel maps) that fit in memory and do not require additional processing, you can specify the images as categorical arrays.

The software automatically converts categorical inputs to numeric values and passes them to the neural network. To specify how the software converts categorical inputs to numeric values, use the CategoricalInputEncoding argument. The layout of categorical arrays depends on the type of image data and must be consistent with the InputDataFormats argument.

Most networks expect categorical image data passed to the minibatchpredict function in the layouts in this table.

| Data | Layout |
|---|---|
| 2-D categorical images | h-by-w-by-1-by-N array, where h and w are the height and width of the images, respectively, and N is the number of images. After the software converts this data to numeric arrays, data in this layout has the data format "SSCB" (spatial, spatial, channel, batch). |
| 3-D categorical images | h-by-w-by-d-by-1-by-N array, where h, w, and d are the height, width, and depth of the images, respectively, and N is the number of images. After the software converts this data to numeric arrays, data in this layout has the data format "SSSCB" (spatial, spatial, spatial, channel, batch). |

For data in a different layout, indicate that your data has a different layout by using the InputDataFormats argument, or use a formatted dlarray object instead. For more information, see Deep Learning Data Formats.

Datastore

Datastores read batches of images. Use datastores when you have data that does not fit in memory, or when you want to apply transformations to the data.

For image data, the minibatchpredict function supports these datastores:

| Datastore | Description | Example Usage |
|---|---|---|
| ImageDatastore | Datastore of images saved on disk. | Make predictions with images saved on your system, where the images are the same size. When the images are different sizes, use an augmentedImageDatastore object. |
| augmentedImageDatastore | Datastore that applies random affine geometric transformations, including resizing. | Make predictions with images saved on disk, where the images are different sizes. When you make predictions using an augmented image datastore, do not apply additional augmentations such as rotation, reflection, shear, and translation. |
| TransformedDatastore | Datastore that transforms batches of data read from an underlying datastore using a custom transformation function. | Transform datastores with outputs that the minibatchpredict function does not support, or apply custom transformations to datastore output. |
| CombinedDatastore | Datastore that reads from two or more underlying datastores. | Make predictions using a network with multiple inputs. |
| Custom mini-batch datastore | Custom datastore that returns mini-batches of data. | Make predictions using data in a layout that other datastores do not support. For details, see Develop Custom Mini-Batch Datastore. |

Tip

ImageDatastore objects allow batch reading of JPG or PNG image files using prefetching. For efficient preprocessing of images for deep learning, including image resizing, use an augmentedImageDatastore object. Do not use the ReadFcn property of ImageDatastore objects. If you set the ReadFcn property to a custom function, then the ImageDatastore object does not prefetch image files and is usually significantly slower.

You can use other built-in datastores for testing deep learning neural networks by using the transform and combine functions. These functions can convert the data read from datastores to the layout required by the minibatchpredict function. The required layout of the datastore output depends on the neural network architecture. For more information, see Datastore Customization.
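The following sketch shows the augmentedImageDatastore route. It resizes images read from disk to the network input size and makes predictions in mini-batches. It relies on three assumptions. First, dlnetDigits.mat from the example above (providing net and classNames) is on the path. Second, the first layer of net is its image input layer. Third, the digit data set that ships with Deep Learning Toolbox is in the folder shown. If it is not, point imageDatastore at your own image folder.

```matlab
% Assumptions: dlnetDigits.mat (net, classNames) from the example above is on
% the path, and the Deep Learning Toolbox digit data set is installed here.
load dlnetDigits
digitFolder = fullfile(matlabroot,"toolbox","nnet","nndemos", ...
    "nndatasets","DigitDataset");
imds = imageDatastore(digitFolder,IncludeSubfolders=true);

% Resize images on the fly to the network input size.
% Assumes the first layer of net is its image input layer.
inputSize = net.Layers(1).InputSize;
augimds = augmentedImageDatastore(inputSize(1:2),imds);

% net has two outputs: class scores and rotation angles.
[scores,angles] = minibatchpredict(net,augimds,MiniBatchSize=128);
labels = scores2label(scores,classNames);
```

The default augmentedImageDatastore applies no random augmentation, so only resizing takes place. This matches the advice above not to augment data at prediction time.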

minibatchqueue Object

For greater control over how the software processes and transforms mini-batches, you can specify data as a minibatchqueue object. When you do, the minibatchpredict function ignores the MiniBatchSize property of the object and uses the MiniBatchSize name-value argument instead. For minibatchqueue input, the PreprocessingEnvironment property must be "serial".
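Here is a minimal sketch of minibatchqueue input. It assumes that dlnetDigits.mat and DigitsDataTest.mat from the example above are on the path; together they provide net and the 28-by-28-by-1-by-N array XTest. The anonymous mini-batch function stacks the observations along the fourth dimension so that the "SSCB" format applies.

```matlab
% Assumptions: dlnetDigits.mat (net) and DigitsDataTest.mat (XTest) from the
% example above are on the path.
load dlnetDigits
load DigitsDataTest

% Read one image per observation along the fourth dimension.
ds = arrayDatastore(XTest,IterationDimension=4);

mbq = minibatchqueue(ds, ...
    MiniBatchFcn=@(dataX) cat(4,dataX{:}), ... stack images along dim 4
    MiniBatchFormat="SSCB", ...                spatial, spatial, channel, batch
    PreprocessingEnvironment="serial");      % required by minibatchpredict

% minibatchpredict ignores mbq.MiniBatchSize and uses this value instead.
[scores,angles] = minibatchpredict(net,mbq,MiniBatchSize=64);
```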

Note

This argument supports complex-valued predictors.

sequences

Sequence or time series data, specified as a numeric array, categorical array, dlarray object, cell array, datastore, or minibatchqueue object.

If you have sequences of the same length that fit in memory and do not require additional processing, then specifying the input data as a numeric array is usually the easiest option. If you have sequences of different lengths that fit in memory and do not require additional processing, then specifying the input data as a cell array of numeric arrays is usually the easiest option. If you want to make predictions with sequences stored on your system, or want to apply additional processing such as custom transformations, then datastores are usually the easiest option.

Tip

Neural networks expect input data with a specific layout. For example, vector-sequence classification networks typically expect vector-sequence representations to be t-by-c arrays, where t and c are the number of time steps and channels of sequences, respectively. Neural networks typically have an input layer that specifies the expected layout of the data.

Most datastores and functions return data in the layout that the network expects. If your data is in a different layout, then indicate the layout by using the InputDataFormats name-value argument or by specifying the input data as a formatted dlarray object. Specifying the InputDataFormats name-value argument is usually easier than adjusting the layout of the input data manually.

For neural networks that do not have input layers, you must use the InputDataFormats name-value argument or formatted dlarray objects.

For more information, see Deep Learning Data Formats.

Numeric Array, Categorical Array, dlarray Object, or Cell Array

For data that fits in memory and does not require additional processing like custom transformations, you can specify a single sequence as a numeric array, categorical array, or dlarray object, or a data set of sequences as a cell array of numeric arrays, categorical arrays, or dlarray objects.

For cell array input, the cell array must be an N-by-1 cell array of numeric arrays, categorical arrays, or dlarray objects, where N is the number of observations.

The software automatically converts categorical inputs to numeric values and passes them to the neural network. To specify how the software converts categorical inputs to numeric values, use the CategoricalInputEncoding argument.

The size and shape of the numeric arrays, categorical arrays, or dlarray objects that represent sequences depend on the type of sequence data and must be consistent with the InputDataFormats argument.

Most networks with a sequence input layer expect sequence data passed to the minibatchpredict function in the layouts in this table.

| Data | Layout |
|---|---|
| Vector sequences | s-by-c matrices, where s and c are the numbers of time steps and channels (features) of the sequences, respectively. |
| Categorical vector sequences | s-by-1 categorical arrays, where s is the number of time steps of the sequences. |
| 1-D image sequences | h-by-c-by-s arrays, where h and c correspond to the height and number of channels of the images, respectively, and s is the sequence length. |
| Categorical 1-D image sequences | h-by-1-by-s categorical arrays, where h corresponds to the height of the images and s is the sequence length. |
| 2-D image sequences | h-by-w-by-c-by-s arrays, where h, w, and c correspond to the height, width, and number of channels of the images, respectively, and s is the sequence length. |
| Categorical 2-D image sequences | h-by-w-by-1-by-s categorical arrays, where h and w correspond to the height and width of the images, respectively, and s is the sequence length. |
| 3-D image sequences | h-by-w-by-d-by-c-by-s arrays, where h, w, d, and c correspond to the height, width, depth, and number of channels of the 3-D images, respectively, and s is the sequence length. |
| Categorical 3-D image sequences | h-by-w-by-d-by-1-by-s categorical arrays, where h, w, and d correspond to the height, width, and depth of the 3-D images, respectively, and s is the sequence length. |

For data in a different layout, indicate that your data has a different layout by using the InputDataFormats argument, or use a formatted dlarray object instead. For more information, see Deep Learning Data Formats.
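The following sketch makes predictions on variable-length vector sequences stored in an N-by-1 cell array of s-by-c matrices. The small, untrained LSTM classifier exists only to illustrate the data layout. Its layer sizes, the random data, and the choice of left padding are assumptions.

```matlab
% Hypothetical, untrained LSTM classifier used only to show the data layout.
numChannels = 3;
numClasses = 4;
layers = [
    sequenceInputLayer(numChannels)
    lstmLayer(16,OutputMode="last")
    fullyConnectedLayer(numClasses)
    softmaxLayer];
net = dlnetwork(layers);

% N-by-1 cell array of s-by-c sequences with different lengths s.
rng(0)
numObservations = 10;
sequences = cell(numObservations,1);
for n = 1:numObservations
    sequences{n} = rand(randi([5 20]),numChannels);
end

% Sequences in each mini-batch are padded; left padding suits OutputMode="last".
scores = minibatchpredict(net,sequences, ...
    MiniBatchSize=4,SequencePaddingDirection="left");
```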

Datastore

Datastores read batches of sequences. Use datastores when you have data that does not fit in memory, or when you want to apply transformations to the data.

For sequence and time-series data, the minibatchpredict function supports these datastores:

| Datastore | Description | Example Usage |
|---|---|---|
| TransformedDatastore | Datastore that transforms batches of data read from an underlying datastore using a custom transformation function. | Transform datastores with outputs that the minibatchpredict function does not support, or apply custom transformations to datastore output. |
| CombinedDatastore | Datastore that reads from two or more underlying datastores. | Make predictions using a network with multiple inputs. |
| Custom mini-batch datastore | Custom datastore that returns mini-batches of data. | Make predictions using data in a layout that other datastores do not support. For details, see Develop Custom Mini-Batch Datastore. |

You can use other built-in datastores by using the transform and combine functions. These functions can convert the data read from datastores to the layout required by the minibatchpredict function. For example, you can transform and combine data read from in-memory arrays and CSV files using ArrayDatastore and TabularTextDatastore objects, respectively. The required layout of the datastore output depends on the neural network architecture. For more information, see Datastore Customization.
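For example, this sketch wraps a cell array of sequences in an ArrayDatastore and standardizes each sequence with transform. It then passes the transformed datastore to minibatchpredict. The untrained network, the random data, and the per-channel standardization with normalize are illustrative assumptions, not a required preprocessing step.

```matlab
% Hypothetical, untrained network and random sequences for illustration.
numChannels = 3;
net = dlnetwork([
    sequenceInputLayer(numChannels)
    lstmLayer(16,OutputMode="last")
    fullyConnectedLayer(2)
    softmaxLayer]);
rng(0)
sequences = arrayfun(@(s) rand(s,numChannels),randi([5 20],8,1), ...
    UniformOutput=false);   % 8-by-1 cell of s-by-c arrays

% OutputType="same" makes each read return a cell containing the sequence.
ds = arrayDatastore(sequences,OutputType="same");

% Standardize each sequence per channel (column) with normalize.
% cellfun handles reads that contain one or several sequences.
dsNorm = transform(ds,@(data) cellfun(@normalize,data,UniformOutput=false));

scores = minibatchpredict(net,dsNorm,MiniBatchSize=4);
```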

minibatchqueue Object

For greater control over how the software processes and transforms mini-batches, you can specify data as a minibatchqueue object. When you do, the minibatchpredict function ignores the MiniBatchSize property of the object and uses the MiniBatchSize name-value argument instead. For minibatchqueue input, the PreprocessingEnvironment property must be "serial".

Note

This argument supports complex-valued predictors.

features

Feature or tabular data, specified as a numeric array, categorical array, datastore, table, or minibatchqueue object.

If you have data that fits in memory and does not require additional processing, then specifying the input data as a numeric array or table is usually the easiest option. If you want to make predictions with feature or tabular data stored on your system, or want to apply additional processing such as custom transformations, then datastores are usually the easiest option.

Tip

Neural networks expect input data with a specific layout. For example, feature classification networks typically expect feature and tabular data representations to be 1-by-c vectors, where c is the number of features of the data. Neural networks typically have an input layer that specifies the expected layout of the data.

Most datastores and functions return data in the layout that the network expects. If your data is in a different layout, then indicate the layout by using the InputDataFormats name-value argument or by specifying the input data as a formatted dlarray object. Specifying the InputDataFormats name-value argument is usually easier than adjusting the layout of the input data manually.

For neural networks that do not have input layers, you must use the InputDataFormats name-value argument or formatted dlarray objects.

For more information, see Deep Learning Data Formats.

Numeric Array or dlarray Objects

For feature data that fits in memory and does not require additional processing like custom transformations, you can specify feature data as a numeric array or dlarray object.

The layouts of numeric arrays and unformatted dlarray objects must be consistent with the InputDataFormats argument. Most networks with feature input expect input data specified as a numObservations-by-numFeatures array, where numObservations is the number of observations and numFeatures is the number of features of the input data.
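Here is a minimal sketch with a numObservations-by-numFeatures array. It uses a small untrained network, random data, and hypothetical class names, and it converts the resulting scores to labels with scores2label, as in the example above.

```matlab
% Hypothetical, untrained feature classifier.
numFeatures = 5;
classNames = ["A" "B" "C"];   % hypothetical class names
net = dlnetwork([
    featureInputLayer(numFeatures)
    fullyConnectedLayer(numel(classNames))
    softmaxLayer]);

% numObservations-by-numFeatures array: one row per observation.
X = rand(100,numFeatures);
scores = minibatchpredict(net,X,MiniBatchSize=32);
labels = scores2label(scores,classNames);
```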

Categorical Array (since R2025a)

For discrete features that fit in memory and do not require additional processing like custom transformations, you can specify the feature data as a categorical array.

The software automatically converts categorical inputs to numeric values and passes them to the neural network. To specify how the software converts categorical inputs to numeric values, use the CategoricalInputEncoding argument. The layout of categorical arrays must be consistent with the InputDataFormats argument.

Most networks with categorical feature input expect input data specified as an N-by-1 vector, where N is the number of observations. After the software converts this data to numeric arrays, data in this layout has the data format "BC" (batch, channel). The size of the "C" (channel) dimension depends on the CategoricalInputEncoding argument.

For data in a different layout, indicate that your data has a different layout by using the InputDataFormats argument, or use a formatted dlarray object instead. For more information, see Deep Learning Data Formats.
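The following sketch requires R2025a or later. It passes an N-by-1 categorical vector directly to minibatchpredict and shows how the network input size must match the CategoricalInputEncoding choice: numCategories channels for "one-hot", and one channel for "integer". Both networks and the color data are hypothetical.

```matlab
% Requires R2025a or later. Hypothetical N-by-1 categorical feature.
color = categorical(["red";"green";"blue";"green";"red"]);
numCategories = numel(categories(color));   % 3

% One-hot encoding: the network needs one input channel per category.
netOneHot = dlnetwork([
    featureInputLayer(numCategories)
    fullyConnectedLayer(2)
    softmaxLayer]);
scoresOneHot = minibatchpredict(netOneHot,color, ...
    CategoricalInputEncoding="one-hot");

% Integer encoding: a single input channel holds the category index.
netInteger = dlnetwork([
    featureInputLayer(1)
    fullyConnectedLayer(2)
    softmaxLayer]);
scoresInteger = minibatchpredict(netInteger,color, ...
    CategoricalInputEncoding="integer");
```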

Table

For feature data that fits in memory and does not require additional processing like custom transformations, you can specify feature data as a table.

To specify feature data as a table, specify a table with numObservations rows and numFeatures+1 columns, where numObservations and numFeatures are the number of observations and features (channels) of the input data, respectively. The minibatchpredict function uses the first numFeatures columns as the input features.

Datastore

Datastores read batches of feature data. Use datastores when you have data that does not fit in memory, or when you want to apply transformations to the data.

For feature and tabular data, the minibatchpredict function supports these datastores:

| Datastore | Description | Example Usage |
|---|---|---|
| TransformedDatastore | Datastore that transforms batches of data read from an underlying datastore using a custom transformation function. | Transform datastores with outputs that the minibatchpredict function does not support, or apply custom transformations to datastore output. |
| CombinedDatastore | Datastore that reads from two or more underlying datastores. | Make predictions using a neural network with multiple inputs. |
| Custom mini-batch datastore | Custom datastore that returns mini-batches of data. | Make predictions using data in a layout that other datastores do not support. For details, see Develop Custom Mini-Batch Datastore. |

You can use other built-in datastores for making predictions by using the transform and combine functions. These functions can convert the data read from datastores to the table or cell array format required by the minibatchpredict function. For more information, see Datastore Customization.

minibatchqueue Object

For greater control over how the software processes and transforms mini-batches, you can specify data as a minibatchqueue object. When you do, the minibatchpredict function ignores the MiniBatchSize property of the object and uses the MiniBatchSize name-value argument instead. For minibatchqueue input, the PreprocessingEnvironment property must be "serial".

Note

This argument supports complex-valued predictors.

data

Generic data or combinations of data types, specified as a numeric array, categorical array, dlarray object, datastore, or minibatchqueue object.

If you have data that fits in memory and does not require additional processing, then specifying the input data as a numeric array is usually the easiest option. If you want to make predictions with data stored on your system, or you want to apply additional processing, then datastores are usually the easiest option.

Tip

Neural networks expect input data with a specific layout. For example, vector-sequence classification networks typically expect vector-sequence representations to be t-by-c arrays, where t and c are the number of time steps and channels of sequences, respectively. Neural networks typically have an input layer that specifies the expected layout of the data.

Most datastores and functions return data in the layout that the network expects. If your data is in a different layout, then indicate the layout by using the InputDataFormats name-value argument or by specifying the input data as a formatted dlarray object. Specifying the InputDataFormats name-value argument is usually easier than adjusting the layout of the input data manually.

For neural networks that do not have input layers, you must use the InputDataFormats name-value argument or formatted dlarray objects.

For more information, see Deep Learning Data Formats.

Numeric Arrays, Categorical Arrays, or dlarray Objects

For data that fits in memory and does not require additional processing like custom transformations, you can specify data as a numeric array, categorical array, or dlarray object.

For a neural network with an inputLayer object, the InputFormat property of the layer gives the expected layout of the input data.

The software automatically converts categorical inputs to numeric values and passes them to the neural network. To specify how the software converts categorical inputs to numeric values, use the CategoricalInputEncoding argument. The layout of categorical arrays must be consistent with the InputDataFormats argument.

For data in a different layout, indicate that your data has a different layout by using the InputDataFormats argument, or use a formatted dlarray object instead. For more information, see Deep Learning Data Formats.

Datastore

Datastores read batches of data. Use datastores when you have data that does not fit in memory, or when you want to apply transformations to the data.

For generic data or combinations of data types, the minibatchpredict function supports these datastores:

| Datastore | Description | Example Usage |
|---|---|---|
| TransformedDatastore | Datastore that transforms batches of data read from an underlying datastore using a custom transformation function. | Transform datastores with outputs that the minibatchpredict function does not support, or apply custom transformations to datastore output. |
| CombinedDatastore | Datastore that reads from two or more underlying datastores. | Make predictions using a neural network with multiple inputs. |
| Custom mini-batch datastore | Custom datastore that returns mini-batches of data. | Make predictions using data in a layout that other datastores do not support. For details, see Develop Custom Mini-Batch Datastore. |

You can use other built-in datastores by using the transform and combine functions. These functions can convert the data read from datastores to the table or cell array format required by minibatchpredict. For more information, see Datastore Customization.

minibatchqueue Object

For greater control over how the software processes and transforms mini-batches, you can specify data as a minibatchqueue object. When you do, the minibatchpredict function ignores the MiniBatchSize property of the object and uses the MiniBatchSize name-value argument instead. For minibatchqueue input, the PreprocessingEnvironment property must be "serial".

Note

This argument supports complex-valued predictors.

X1,...,XN

In-memory data for a neural network with multiple inputs, specified as numeric arrays, categorical arrays, dlarray objects, tables, or cell arrays.

For a neural network with multiple inputs, if you have data that fits in memory and does not require additional processing, then specifying the input data as in-memory arrays is usually the easiest option. If you want to make predictions with data stored on your system, or you want to apply additional processing, then using datastores is usually the easiest option.

Tip

Neural networks expect input data with a specific layout. For example, vector-sequence classification networks typically expect vector-sequence representations to be t-by-c arrays, where t and c are the number of time steps and channels of sequences, respectively. Neural networks typically have an input layer that specifies the expected layout of the data.

Most datastores and functions return data in the layout that the network expects. If your data is in a different layout, then indicate the layout by using the InputDataFormats name-value argument or by specifying the input data as a formatted dlarray object. Specifying the InputDataFormats name-value argument is usually easier than adjusting the layout of the input data manually.

For neural networks that do not have input layers, you must use the InputDataFormats name-value argument or formatted dlarray objects.

For more information, see Deep Learning Data Formats.

For each input X1,...,XN, where N is the number of inputs, specify the data as a numeric array, categorical array, dlarray object, table, or cell array. The layout of each input must match one of the layouts described by the images, sequences, features, or data arguments. The input Xi corresponds to the network input net.InputNames(i).
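This sketch builds a hypothetical two-input network and makes predictions on the in-memory arrays X1 and X2. It then repeats the call with the same data in a CombinedDatastore, which provides one column per network input. The layer names and sizes are assumptions. Check net.InputNames to confirm the order in which minibatchpredict matches the inputs.

```matlab
% Hypothetical two-input network: two feature branches summed together.
net = dlnetwork;
net = addLayers(net,[
    featureInputLayer(3,Name="in1")
    fullyConnectedLayer(8,Name="fc1")
    additionLayer(2,Name="add")
    fullyConnectedLayer(4,Name="fcOut")
    softmaxLayer(Name="sm")]);
net = addLayers(net,[
    featureInputLayer(2,Name="in2")
    fullyConnectedLayer(8,Name="fc2")]);
net = connectLayers(net,"fc2","add/in2");
net = initialize(net);
disp(net.InputNames)   % Xi is matched to net.InputNames(i)

numObservations = 50;
X1 = rand(numObservations,3);   % features for the first input
X2 = rand(numObservations,2);   % features for the second input
scores = minibatchpredict(net,X1,X2);

% Same data through a CombinedDatastore: one column per network input.
dsCombined = combine(arrayDatastore(X1),arrayDatastore(X2));
scoresDs = minibatchpredict(net,dsCombined);
```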

Note

This argument supports complex-valued predictors.

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Example: minibatchpredict(net,images,MiniBatchSize=32) makes predictions by looping over images using mini-batches of size 32.

MiniBatchSize

Size of mini-batches to use for prediction, specified as a positive integer. Larger mini-batch sizes require more memory, but can lead to faster predictions.

When you make predictions with sequences of different lengths, the mini-batch size can affect the amount of padding added to the input data, which can result in different predicted values. Try using different values to see which works best with your network. To specify padding options, use the SequenceLength, SequencePaddingDirection, and SequencePaddingValue name-value arguments.
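To see why the mini-batch size matters, this plain-MATLAB sketch counts the padded time steps that "longest" padding would add for a few hypothetical sequence lengths. It assumes that each mini-batch is padded to its own longest sequence and that the sequences are split into mini-batches in their given order. It does not call minibatchpredict and models only the padding arithmetic.

```matlab
% Hypothetical sequence lengths, split into mini-batches in the given order.
seqLengths = [5 6 19 20];
miniBatchSizes = [2 4];
totalPadding = zeros(size(miniBatchSizes));
for k = 1:numel(miniBatchSizes)
    mbs = miniBatchSizes(k);
    for first = 1:mbs:numel(seqLengths)
        last = min(first+mbs-1,numel(seqLengths));
        batch = seqLengths(first:last);
        % "longest": pad every sequence to the longest one in its mini-batch.
        totalPadding(k) = totalPadding(k) + sum(max(batch) - batch);
    end
end
disp(totalPadding)   % 2 padded steps for size 2, 30 for size 4
```

With these lengths, a mini-batch size of 2 groups similar lengths together and adds only 2 padded steps. A mini-batch size of 4 pads everything to 20 time steps and adds 30.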

Note

If you specify the input data as a minibatchqueue object, then the minibatchpredict function uses the mini-batch size specified by this argument and not the MiniBatchSize property of the minibatchqueue object.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64

Outputs

Layers to extract outputs from, specified as a string array or a cell array of character vectors containing the layer names.

- If Outputs(i) corresponds to a layer with a single output, then Outputs(i) is the name of the layer.
- If Outputs(i) corresponds to a layer with multiple outputs, then Outputs(i) is the layer name followed by the / character and the name of the layer output: "layerName/outputName".

To use the outputs of layers inside a networkLayer object, first expand the nested network using the expandLayers function. For more information, see Network Layer Tips.

The default value is net.OutputNames.
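Here is a sketch of the Outputs argument on a small untrained network with hypothetical layer names. The call returns one output per entry of Outputs, in the listed order: first the activations of the "relu" layer, then the softmax scores.

```matlab
% Hypothetical, untrained network with named layers.
net = dlnetwork([
    featureInputLayer(4,Name="input")
    fullyConnectedLayer(8,Name="fc1")
    reluLayer(Name="relu")
    fullyConnectedLayer(3,Name="fc2")
    softmaxLayer(Name="softmax")]);
X = rand(20,4);

% One output per entry of Outputs, in the same order.
[activations,scores] = minibatchpredict(net,X,Outputs=["relu" "softmax"]);
```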

Acceleration

Performance optimization, specified as one of these values:

- "auto": Automatically apply a number of optimizations suitable for the input network and hardware resources.
- "mex": Compile and execute a MEX function. This option is available only when using a GPU. You must store the input data or the network learnable parameters as gpuArray objects. Using a GPU requires Parallel Computing Toolbox™ and a supported GPU device. For information on supported devices, see GPU Computing Requirements (Parallel Computing Toolbox). If Parallel Computing Toolbox or a suitable GPU is not available, then the software returns an error.
- "none": Disable all acceleration.

When you use the "auto" or "mex" option, the software can offer performance benefits at the expense of an increased initial run time. Subsequent calls to the function are typically faster. Use performance optimization when you call the function multiple times using different input data.

When Acceleration is "mex", the software generates and executes a MEX function based on the model and parameters you specify in the function call. A single model can have several associated MEX functions at one time. Clearing the model variable also clears any MEX functions associated with that model.

When Acceleration is "auto", the software does not generate a MEX function.

The "mex" option is available only when you use a GPU. You must have a C/C++ compiler installed and the GPU Coder™ Interface for Deep Learning support package. Install the support package using the Add-On Explorer in MATLAB®. For setup instructions, see Set Up Compiler (GPU Coder). GPU Coder is not required.

The "mex" option has these limitations:

- Only the single precision data type is supported. The input data or the network learnable parameters must have the underlying data type single.
- Networks with inputs that are not connected to an input layer are not supported.
- Traced dlarray objects are not supported. This means that the "mex" option is not supported inside a call to the dlfeval function.
- Not all layers are supported. For a list of supported layers, see Supported Layers (GPU Coder).
- MATLAB Compiler™ does not support deploying your network when using the "mex" option.

For quantized networks, the "mex" option requires a CUDA® enabled NVIDIA® GPU with compute capability 6.1, 6.3, or higher.

ExecutionEnvironment

Hardware resource, specified as one of these values:

- "auto": Use a GPU if one is available. Otherwise, use the CPU. If net is a quantized network with the TargetLibrary property set to "none", use the CPU even if a GPU is available.
- "gpu": Use the GPU. Using a GPU requires a Parallel Computing Toolbox license and a supported GPU device. For information about supported devices, see GPU Computing Requirements (Parallel Computing Toolbox). If Parallel Computing Toolbox or a suitable GPU is not available, then the software returns an error.
- "cpu": Use the CPU.
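This sketch forces the CPU with ExecutionEnvironment="cpu". When canUseGPU reports a usable GPU, it repeats the prediction on the GPU and measures the largest difference between the two results. The network and data are hypothetical. Small numeric differences between devices can occur.

```matlab
% Hypothetical, untrained network and random data.
net = dlnetwork([
    featureInputLayer(4)
    fullyConnectedLayer(3)
    softmaxLayer]);
X = rand(1000,4);

% Force the CPU even if a GPU is available.
scoresCPU = minibatchpredict(net,X,ExecutionEnvironment="cpu");

% canUseGPU is true only when Parallel Computing Toolbox and a supported
% GPU are available, so the "gpu" call below does not error.
if canUseGPU
    scoresGPU = minibatchpredict(net,X,ExecutionEnvironment="gpu");
    maxDiff = max(abs(gather(scoresGPU) - scoresCPU),[],"all")
end
```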

SequenceLength

Option to pad or truncate the input sequences, specified as one of these options:

- "longest": Pad sequences to have the same length as the longest sequence. This option does not discard any data, although padding can introduce noise to the neural network.
- "shortest": Truncate sequences to have the same length as the shortest sequence. This option ensures that the function does not add padding, at the cost of discarding data.

To learn more about the effects of padding and truncating input sequences, see Sequence Padding and Truncation.

SequencePaddingDirection

Direction of padding or truncation, specified as one of these options:

- "right": Pad or truncate sequences on the right. The sequences start at the same time step and the software truncates or adds padding to the end of each sequence.
- "left": Pad or truncate sequences on the left. The software truncates or adds padding to the start of each sequence so that the sequences end at the same time step.

Recurrent layers process sequence data one time step at a time, so when the recurrent layer OutputMode property is "last", any padding in the final time steps can negatively influence the layer output. To pad or truncate sequence data on the left, set the SequencePaddingDirection name-value argument to "left".

For sequence-to-sequence neural networks (when the OutputMode property is "sequence" for each recurrent layer), any padding in the first time steps can negatively influence the predictions for the earlier time steps. To pad or truncate sequence data on the right, set the SequencePaddingDirection name-value argument to "right".

To learn more about the effects of padding and truncating sequences, see Sequence Padding and Truncation.
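For a network whose recurrent layer uses OutputMode="last", a minimal sketch of left padding might look like this. It assumes Deep Learning Toolbox; the network, the data, and the variable names are hypothetical, and the sequences are assumed to be time steps-by-channels matrices.

```matlab
% Hypothetical sequence-to-one classifier whose LSTM outputs the last time step
layers = [
    sequenceInputLayer(3)
    lstmLayer(8,OutputMode="last")
    fullyConnectedLayer(4)
    softmaxLayer];
net = dlnetwork(layers);

X = {rand(10,3); rand(7,3); rand(12,3)};

% Left padding: all sequences end at the same time step, so the padding
% comes first and the final time steps seen by the LSTM are real data
scoresLeft = minibatchpredict(net,X,SequencePaddingDirection="left");

% Right padding, for comparison: the shorter sequences end in padding
scoresRight = minibatchpredict(net,X,SequencePaddingDirection="right");
```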

Value for padding the input sequences, specified as a scalar.

Do not pad sequences with NaN, because doing so can propagate errors through the neural network.

Data Types: single | double | int8 | int16 | int32 | int64 | uint8 | uint16 | uint32 | uint64
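A minimal sketch of choosing a finite padding value, assuming Deep Learning Toolbox and a hypothetical randomly initialized network. The value -1 is an arbitrary illustration, not a recommendation.

```matlab
% Hypothetical sequence-to-one classifier: 3 input channels, 4 classes
layers = [
    sequenceInputLayer(3)
    lstmLayer(8,OutputMode="last")
    fullyConnectedLayer(4)
    softmaxLayer];
net = dlnetwork(layers);

% Sequences of different lengths, so padding is required
X = {rand(10,3); rand(7,3); rand(12,3)};

% Pad with a finite scalar (never NaN)
scores = minibatchpredict(net,X,SequencePaddingValue=-1);
```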

Since R2025a

Encoding of categorical inputs, specified as one of these values:

- "integer": Convert categorical inputs to their integer value. In this case, the network must have one input channel for each of the categorical inputs.
- "one-hot": Convert categorical inputs to one-hot encoded vectors. In this case, the network must have numCategories channels for each of the categorical inputs, where numCategories is the number of categories of the corresponding categorical input.

Description of the input data dimensions, specified as a string array, character vector, or cell array of character vectors.

If InputDataFormats is "auto", then the software uses the formats expected by the network input. Otherwise, the software uses the specified formats for the corresponding network input.

A data format is a string of characters, where each character describes the type of the corresponding data dimension.

The characters are:

- "S": Spatial
- "C": Channel
- "B": Batch
- "T": Time
- "U": Unspecified

For example, consider an array that represents a batch of sequences where the first, second, and third dimensions correspond to channels, observations, and time steps, respectively. You can describe the data as having the format "CBT" (channel, batch, time).

You can specify multiple dimensions labeled "S" or "U". You can use the labels "C", "B", and "T" once each, at most. The software ignores singleton trailing "U" dimensions after the second dimension.

For a neural network net with multiple inputs, specify an array of input data formats, where InputDataFormats(i) corresponds to the input net.InputNames(i).

For more information, see Deep Learning Data Formats.

Data Types: char | string | cell
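The "CBT" example above can be sketched as follows, assuming Deep Learning Toolbox and a hypothetical randomly initialized network with three input channels. The data is a single numeric array holding 5 observations of 10 time steps each; all names and sizes are illustrative.

```matlab
% Hypothetical sequence-to-one classifier: 3 input channels, 4 classes
layers = [
    sequenceInputLayer(3)
    lstmLayer(8,OutputMode="last")
    fullyConnectedLayer(4)
    softmaxLayer];
net = dlnetwork(layers);

% 3 channels, 5 observations, 10 time steps in one numeric array
X = rand(3,5,10);

% Tell minibatchpredict how to interpret each dimension of X
scores = minibatchpredict(net,X,InputDataFormats="CBT");
```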

Description of the output data dimensions, specified as one of these values:

- "auto": If the output data has the same number of dimensions as the input data, then the minibatchpredict function uses the format specified by InputDataFormats. If the output data has a different number of dimensions than the input data, then the minibatchpredict function automatically permutes the dimensions of the output data so that they are consistent with the network input layers or the InputDataFormats value.
- String, character vector, or cell array of character vectors: The minibatchpredict function uses the specified data formats.

Specify each output data format using the same characters ("S", "C", "B", "T", and "U") and the same rules as for InputDataFormats.

For more information, see Deep Learning Data Formats.

Data Types: char | string | cell

Flag to return padded data as a uniform array, specified as a logical 1 (true) or 0 (false). When you set the value to 0, the software outputs a cell array of predictions.
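A minimal sketch for a sequence-to-sequence network, assuming Deep Learning Toolbox. The network, its sizes, and the variable names are hypothetical, and the sequences are assumed to be time steps-by-channels matrices. With UniformOutput=false, the predictions come back as a cell array instead of one padded numeric array.

```matlab
% Hypothetical sequence-to-sequence network: one prediction per time step
layers = [
    sequenceInputLayer(3)
    lstmLayer(8,OutputMode="sequence")
    fullyConnectedLayer(2)];
net = dlnetwork(layers);

% Sequences with 10, 7, and 12 time steps
X = {rand(10,3); rand(7,3); rand(12,3)};

% Return a cell array of predictions rather than a padded uniform array
Y = minibatchpredict(net,X,UniformOutput=false);
```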

Output Arguments

Neural network predictions, returned as numeric arrays, dlarray objects, or cell arrays Y1,...,YM, where M is the number of network outputs.

The predictions Yi correspond to the output Outputs(i).

For a classification neural network, the elements of the output correspond to the scores for each class. The order of the scores matches the order of the categories in the training data. For example, if you train the neural network using the categorical labels TTrain, then the order of the scores matches the order of the categories given by categories(TTrain).
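The following sketch illustrates the ordering rule with hypothetical labels and hand-written scores instead of a trained network. It assumes that the scores are arranged with one row per observation, as in the digits example, and that scores2label from Deep Learning Toolbox is available.

```matlab
% Hypothetical training labels; categorical sorts the categories alphabetically
TTrain = categorical(["dog";"cat";"bird";"dog"]);
classNames = categories(TTrain);   % score columns follow this order

% Hypothetical scores for two observations, one row per observation
scores = [0.1 0.7 0.2; 0.6 0.3 0.1];

% Convert each row of scores to the label with the highest score
labels = scores2label(scores,classNames);
```

Here the second column of scores corresponds to "cat" because "cat" is the second element of categories(TTrain).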

More About

The minibatchpredict function converts integer numeric array and datastore inputs to single-precision floating-point values. For minibatchqueue inputs, the software uses the data type specified by the OutputCast property of that input.
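A minimal sketch of the integer-to-single conversion, assuming Deep Learning Toolbox and a hypothetical randomly initialized image network (whose learnable parameters are single precision). The image size, layers, and variable names are illustrative only.

```matlab
% Hypothetical image classifier; Normalization="none" avoids needing statistics
layers = [
    imageInputLayer([8 8 1],Normalization="none")
    convolution2dLayer(3,4)
    reluLayer
    globalAveragePooling2dLayer
    fullyConnectedLayer(3)
    softmaxLayer];
net = dlnetwork(layers);

% Five hypothetical 8-by-8 grayscale images stored as uint8
X = randi([0 255],8,8,1,5,'uint8');

% The uint8 input is converted to single before the computations
scores = minibatchpredict(net,X,ExecutionEnvironment="cpu");
```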

When you use prediction or validation functions with a dlnetwork object with single-precision learnable and state parameters, the software performs the computations using single-precision, floating-point arithmetic.

When you use prediction or validation functions with a dlnetwork object with double-precision learnable and state parameters:

If the input data is single precision, the software performs the computations using single-precision, floating-point arithmetic.

If the input data is double precision, the software performs the computations using double-precision, floating-point arithmetic.
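The following sketch illustrates these two cases, assuming Deep Learning Toolbox and a hypothetical network that has no state parameters, so casting the learnable parameters with dlupdate is enough to obtain a double-precision network. It also assumes that the returned predictions have the same class as the arithmetic used.

```matlab
% Hypothetical image classifier without state parameters (no batch normalization)
layers = [
    imageInputLayer([8 8 1],Normalization="none")
    convolution2dLayer(3,4)
    reluLayer
    globalAveragePooling2dLayer
    fullyConnectedLayer(3)
    softmaxLayer];
net = dlnetwork(layers);

% Cast every learnable parameter to double precision
netDouble = dlupdate(@double,net);

XSingle = rand(8,8,1,5,'single');
XDouble = rand(8,8,1,5);   % double by default

% Single input: single-precision arithmetic
scoresS = minibatchpredict(netDouble,XSingle,ExecutionEnvironment="cpu");

% Double input: double-precision arithmetic
scoresD = minibatchpredict(netDouble,XDouble,ExecutionEnvironment="cpu");
```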

To provide the best performance, deep learning using a GPU in MATLAB is not guaranteed to be deterministic. Depending on your network architecture, under some conditions you might get different results when using a GPU to train two identical networks or make two predictions using the same network and data. If you require determinism when performing deep learning operations using a GPU, use the deep.gpu.deterministicAlgorithms function (since R2024b).
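A minimal sketch, assuming R2024b or later, Parallel Computing Toolbox, and a supported GPU; the code is skipped when no GPU can be used. The network and data are hypothetical, and the previous setting is restored afterward so that the change does not leak into later code.

```matlab
% Hypothetical sequence-to-one classifier: 3 input channels, 4 classes
layers = [
    sequenceInputLayer(3)
    lstmLayer(8,OutputMode="last")
    fullyConnectedLayer(4)
    softmaxLayer];
net = dlnetwork(layers);
X = {rand(10,3); rand(7,3); rand(12,3)};

if canUseGPU
    % Turn on deterministic GPU algorithms and keep the previous setting
    previousState = deep.gpu.deterministicAlgorithms(true);

    scores1 = minibatchpredict(net,X,ExecutionEnvironment="gpu");
    scores2 = minibatchpredict(net,X,ExecutionEnvironment="gpu");

    % Restore the previous setting
    deep.gpu.deterministicAlgorithms(previousState);
end
```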

If you use the rng function to set the same random number generator and seed, then predictions made using the CPU are reproducible.
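A minimal sketch, assuming Deep Learning Toolbox: resetting the generator with the same seed before building a hypothetical network and generating hypothetical data reproduces the CPU predictions exactly.

```matlab
layers = [
    sequenceInputLayer(3)
    lstmLayer(8,OutputMode="last")
    fullyConnectedLayer(4)
    softmaxLayer];

% First run: fix the seed, then initialize the network and create the data
rng(0)
net1 = dlnetwork(layers);
X1 = {rand(10,3); rand(7,3)};
scores1 = minibatchpredict(net1,X1,ExecutionEnvironment="cpu");

% Second run with the same generator and seed
rng(0)
net2 = dlnetwork(layers);
X2 = {rand(10,3); rand(7,3)};
scores2 = minibatchpredict(net2,X2,ExecutionEnvironment="cpu");
```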

Tips

To make predictions in parallel using multiple GPUs, create a parallel pool with one worker per GPU, divide up your data, and make the predictions in parallel. For an example showing how to make predictions using multiple GPUs, see Train Network Using Automatic Multi-GPU Support.

Extended Capabilities

The minibatchpredict function fully supports GPU acceleration.

By default, minibatchpredict uses a GPU if one is available. If net is a quantized network with the TargetLibrary property set to "none", minibatchpredict uses the CPU even if a GPU is available. You can specify the hardware that the minibatchpredict function uses by specifying the ExecutionEnvironment name-value argument.

For more information, see Run MATLAB Functions on a GPU (Parallel Computing Toolbox).

Version History

Introduced in R2024a

Starting in R2025a, to specify how to convert categorical inputs to numeric values for making predictions with a neural network, use the CategoricalInputEncoding argument.
