voiceActivityDetector

Detect presence of speech in audio signal

Description

The voiceActivityDetector System object™ detects the presence of speech in an audio segment. You can also use the voiceActivityDetector System object to output an estimate of the noise variance per frequency bin.

To detect the presence of speech:

1. Create the voiceActivityDetector object and set its properties.
2. Call the object with arguments, as if it were a function.

To learn more about how System objects work, see What Are System Objects?

Creation

Description

VAD = voiceActivityDetector creates a System object, VAD, that detects the presence of speech independently across each input channel.

VAD = voiceActivityDetector(Name,Value) sets each property Name to the specified Value. Unspecified properties have default values.

Example: VAD = voiceActivityDetector('InputDomain','Frequency') creates a System object, VAD, that accepts frequency-domain input.
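As a further illustration of the Name,Value syntax, the following minimal sketch sets two of the properties named in the Algorithms section (Window and FFTLength) at creation time. The specific values 'Hamming' and 1024 are assumptions chosen for illustration, not recommended settings; InputDomain is left at its default.

```matlab
% Create a detector with two properties set by name-value pairs.
% 'Hamming' and 1024 are illustrative choices, not recommendations.
VAD = voiceActivityDetector('Window','Hamming','FFTLength',1024);

% Inspect the configured and the unspecified (default) properties.
disp(VAD.Window)        % window applied to time-domain input
disp(VAD.FFTLength)     % FFT length used for the frequency conversion
disp(VAD.InputDomain)   % not specified above, so it keeps its default
```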

Properties

Usage

Description

[probability,noiseEstimate] = VAD(audioIn) applies a voice activity detector on the input, audioIn, and returns the probability that speech is present. It also returns the estimated noise variance per frequency bin.
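The call syntax above can be sketched as follows. This is a minimal example on synthetic data, not a realistic detection scenario: the frame length of 512 samples and the low-level random noise standing in for audio are assumptions made so that the snippet needs no audio file.

```matlab
% Create a detector with default properties (time-domain input).
VAD = voiceActivityDetector;

% Synthetic single-channel frame used as placeholder input.
% The frame length (512) and noise level are illustrative assumptions.
frameLength = 512;
audioIn = 0.01*randn(frameLength,1);

% Call the object as if it were a function.
[probability,noiseEstimate] = VAD(audioIn);

% probability: speech presence probability for this frame
% noiseEstimate: estimated noise variance per frequency bin
disp(probability)
disp(size(noiseEstimate))
```

In a streaming application, the same call would typically sit inside a loop that passes one frame at a time, so that the detector can carry its noise estimate from frame to frame.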

Input Arguments

Output Arguments

Object Functions

To use an object function, specify the System object as the first input argument. For example, to release system resources of a System object named obj, use this syntax:

release(obj)
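For this object specifically, a release call might look like the sketch below. It assumes the usual System object behavior in which the first call locks the object and release unlocks it; the synthetic frame and the choice of the Window property are illustrative only.

```matlab
VAD = voiceActivityDetector;        % default configuration
frame = 0.01*randn(512,1);          % synthetic placeholder frame (assumed size)

VAD(frame);                         % the first call locks the object
lockedBefore = isLocked(VAD);       % expected to be true at this point

release(VAD);                       % release resources and unlock the object
lockedAfter = isLocked(VAD);        % expected to be false after release

% Once released, properties that are not tunable can be changed.
VAD.Window = 'Hamming';             % illustrative property change
```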

Examples

Algorithms

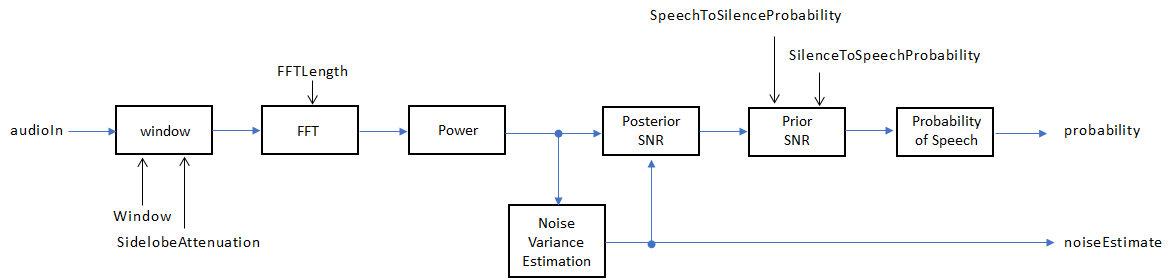

The voiceActivityDetector implements the algorithm described in [1].

If InputDomain is specified as 'Time', the input signal is windowed and then converted to the frequency domain according to the Window, SidelobeAttenuation, and FFTLength properties. If InputDomain is specified as 'Frequency', the input is assumed to be a windowed discrete-time Fourier transform (DTFT) of an audio signal. The signal is then converted to the power domain. Noise variance is estimated according to [2]. The posterior and prior SNR are estimated according to the minimum mean-square error (MMSE) formula described in [3]. A log-likelihood ratio test and a hidden Markov model (HMM)-based hang-over scheme determine the probability that the current frame contains speech, according to [1].
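The likelihood ratio step can be illustrated with a simplified sketch in plain MATLAB. This is not the object's internal implementation. It assumes the noise variance is already known (instead of being tracked as in [2]), replaces the smoothed prior SNR estimate with a simple maximum-likelihood estimate, uses no window, and omits the HMM-based hang-over scheme. All variable names and signal values are hypothetical.

```matlab
rng(0);                               % reproducible placeholder data
N = 256;                              % frame length (assumed)
sigma2 = 0.01;                        % noise variance, assumed known here
noiseVar = sigma2*ones(N,1);          % per-bin noise variance for white noise

n = (0:N-1).';
noiseFrame  = sqrt(sigma2)*randn(N,1);                     % noise only
speechFrame = sin(2*pi*16*n/N) + sqrt(sigma2)*randn(N,1);  % tone stands in for speech

frames = [noiseFrame, speechFrame];
llr = zeros(1,2);
for k = 1:2
    P = abs(fft(frames(:,k))).^2 / N;   % power per frequency bin
    gammaPost = P ./ noiseVar;          % posterior SNR per bin
    xiPrior = max(gammaPost - 1, 0);    % prior SNR, ML estimate (simplification)
    % Per-bin log-likelihood ratio under the Gaussian model of [1].
    binLLR = gammaPost.*xiPrior./(1 + xiPrior) - log(1 + xiPrior);
    llr(k) = mean(binLLR);              % average over bins (log of geometric mean)
end

disp(llr)   % the second value (tone plus noise) exceeds the first (noise only)
```

Comparing the averaged log-likelihood ratio against a threshold yields a per-frame decision; the hang-over scheme in [1] then smooths those decisions across frames.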

References

[1] Sohn, Jongseo, Nam Soo Kim, and Wonyong Sung. "A Statistical Model-Based Voice Activity Detection." IEEE Signal Processing Letters. Vol. 6, No. 1, 1999.

[2] Martin, R. "Noise Power Spectral Density Estimation Based on Optimal Smoothing and Minimum Statistics." IEEE Transactions on Speech and Audio Processing. Vol. 9, No. 5, 2001, pp. 504–512.

[3] Ephraim, Y., and D. Malah. "Speech Enhancement Using a Minimum Mean-Square Error Short-Time Spectral Amplitude Estimator." IEEE Transactions on Acoustics, Speech, and Signal Processing. Vol. 32, No. 6, 1984, pp. 1109–1121.

Extended Capabilities

Version History

Introduced in R2018a

See Also

audioFeatureExtractor | mfcc | pitch | cepstralCoefficients | Voice Activity Detector