kfoldLoss

Loss for cross-validated partitioned regression model

Description

L = kfoldLoss(CVMdl,Name,Value)

Examples

Find the cross-validation loss for a regression ensemble of the carsmall data.

Load the carsmall data set and select displacement, horsepower, and vehicle weight as predictors.

load carsmall

X = [Displacement Horsepower Weight];

Train an ensemble of regression trees.

rens = fitrensemble(X,MPG);

Create a cross-validated ensemble from rens and find the k-fold cross-validation loss.

rng(10,'twister') % For reproducibility

cvrens = crossval(rens);

L = kfoldLoss(cvrens)

L = 28.7114

The mean squared error (MSE) is a measure of model quality. Examine the MSE for each fold of a cross-validated regression model.

Load the carsmall data set. Specify the predictor X and the response data Y.

load carsmall

X = [Cylinders Displacement Horsepower Weight];

Y = MPG;

Train a cross-validated regression tree model. By default, the software implements 10-fold cross-validation.

rng('default') % For reproducibility

CVMdl = fitrtree(X,Y,'CrossVal','on');

Compute the MSE for each fold. Visualize the distribution of the loss values by using a box plot. Notice that none of the values is an outlier.

losses = kfoldLoss(CVMdl,'Mode','individual')

losses = 10×1

42.5072

20.3995

22.3737

34.4255

40.8005

60.2755

19.5562

9.2060

29.0788

16.3386

boxchart(losses)
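As a numeric cross-check of the box plot, the following sketch applies a 1.5 × IQR fence to the ten per-fold values printed above. It assumes that the box plot flags outliers with that rule and that quartiles are interpolated at positions p*n + 0.5 of the sorted data; both conventions are assumptions of this sketch, and the names sortedLosses, quartileAt, and isOutlier are illustrative.

```matlab
% Per-fold MSE values copied from the output above.
losses = [42.5072; 20.3995; 22.3737; 34.4255; 40.8005; ...
          60.2755; 19.5562;  9.2060; 29.0788; 16.3386];

% Quartiles by linear interpolation at positions p*n + 0.5 of the sorted
% data (an assumed convention for this sketch).
sortedLosses = sort(losses);
n = numel(sortedLosses);
quartileAt = @(p) interp1((1:n)', sortedLosses, p*n + 0.5);
q1 = quartileAt(0.25);
q3 = quartileAt(0.75);

% Flag values more than 1.5 interquartile ranges outside the box.
iqrValue = q3 - q1;
isOutlier = losses < q1 - 1.5*iqrValue | losses > q3 + 1.5*iqrValue;
numOutliers = nnz(isOutlier)
```

Under these assumptions the fences fall at roughly -12.31 and 72.67, so no fold loss is flagged, which agrees with the box plot.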

Compute the loss and the predictions for a classification model, first partitioned using holdout validation and then partitioned using 3-fold cross-validation. Compare the two sets of losses and predictions.

Create a table from the fisheriris data set, which contains length and width measurements from the sepals and petals of three species of iris flowers. View the first eight observations.

fisheriris = readtable("fisheriris.csv");

head(fisheriris)

SepalLength SepalWidth PetalLength PetalWidth Species

___________ __________ ___________ __________ __________

5.1 3.5 1.4 0.2 {'setosa'}

4.9 3 1.4 0.2 {'setosa'}

4.7 3.2 1.3 0.2 {'setosa'}

4.6 3.1 1.5 0.2 {'setosa'}

5 3.6 1.4 0.2 {'setosa'}

5.4 3.9 1.7 0.4 {'setosa'}

4.6 3.4 1.4 0.3 {'setosa'}

5 3.4 1.5 0.2 {'setosa'}

Partition the data using cvpartition. First, create a partition for holdout validation, using approximately 70% of the observations for the training data and 30% for the validation data. Then, create a partition for 3-fold cross-validation.

rng(0,"twister") % For reproducibility

holdoutPartition = cvpartition(fisheriris.Species,Holdout=0.30);

kfoldPartition = cvpartition(fisheriris.Species,KFold=3);

holdoutPartition and kfoldPartition are both stratified random partitions. You can use the training and test functions to find the indices for the observations in the training and validation sets, respectively.

Train a classification tree model using the fisheriris data. Specify Species as the response variable.

Mdl = fitctree(fisheriris,"Species");

Create the partitioned classification models using crossval.

holdoutMdl = crossval(Mdl,CVPartition=holdoutPartition)

holdoutMdl =

ClassificationPartitionedModel

CrossValidatedModel: 'Tree'

PredictorNames: {'SepalLength' 'SepalWidth' 'PetalLength' 'PetalWidth'}

ResponseName: 'Species'

NumObservations: 150

KFold: 1

Partition: [1×1 cvpartition]

ClassNames: {'setosa' 'versicolor' 'virginica'}

ScoreTransform: 'none'

Properties, Methods

kfoldMdl = crossval(Mdl,CVPartition=kfoldPartition)

kfoldMdl =

ClassificationPartitionedModel

CrossValidatedModel: 'Tree'

PredictorNames: {'SepalLength' 'SepalWidth' 'PetalLength' 'PetalWidth'}

ResponseName: 'Species'

NumObservations: 150

KFold: 3

Partition: [1×1 cvpartition]

ClassNames: {'setosa' 'versicolor' 'virginica'}

ScoreTransform: 'none'

Properties, Methods

holdoutMdl and kfoldMdl are ClassificationPartitionedModel objects.

Compute the minimal expected misclassification cost for holdoutMdl and kfoldMdl using kfoldLoss. Because both models use the default cost matrix, this cost is the same as the classification error.

holdoutL = kfoldLoss(holdoutMdl)

holdoutL = 0.0889

kfoldL = kfoldLoss(kfoldMdl)

kfoldL = 0.0600

holdoutL is the error computed using the predictions for one validation set, while kfoldL is an average error computed using the predictions for three folds of validation data. Cross-validation metrics tend to be better indicators of a model's performance on unseen data.

Compute the validation data predictions for the two models using kfoldPredict.

[holdoutLabels,holdoutScores] = kfoldPredict(holdoutMdl);

[kfoldLabels,kfoldScores] = kfoldPredict(kfoldMdl);

holdoutClassNames = holdoutMdl.ClassNames;

holdoutScores = array2table(holdoutScores,VariableNames=holdoutClassNames);

kfoldClassNames = kfoldMdl.ClassNames;

kfoldScores = array2table(kfoldScores,VariableNames=kfoldClassNames);

predictions = table(holdoutLabels,kfoldLabels, ...
    holdoutScores,kfoldScores, ...
    VariableNames=["holdoutMdl Labels","kfoldMdl Labels", ...
    "holdoutMdl Scores","kfoldMdl Scores"])

predictions=150×4 table

holdoutMdl Labels kfoldMdl Labels holdoutMdl Scores kfoldMdl Scores

_________________ _______________ _________________________________ _________________________________

setosa versicolor virginica setosa versicolor virginica

______ __________ _________ ______ __________ _________

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} 1 0 0 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} 1 0 0 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

{'setosa'} {'setosa'} 1 0 0 1 0 0

{'setosa'} {'setosa'} 1 0 0 1 0 0

{'setosa'} {'setosa'} NaN NaN NaN 1 0 0

⋮

kfoldPredict returns NaN scores for the observations used to train holdoutMdl.Trained. For these observations, the function selects the class label with the highest frequency as the predicted label. In this case, because all classes have the same frequency, the function selects the first class (setosa) as the predicted label. The function uses the trained model to return predictions for the validation set observations. kfoldPredict returns each kfoldMdl prediction using the model in kfoldMdl.Trained that was trained without that observation.

To predict responses for unseen data, use the model trained on the entire data set (Mdl) and its predict function rather than a partitioned model such as holdoutMdl or kfoldMdl.

Train a cross-validated generalized additive model (GAM) with 10 folds. Then, use kfoldLoss to compute the cumulative cross-validation regression loss (mean squared errors). Use the errors to determine the optimal number of trees per predictor (linear term for predictor) and the optimal number of trees per interaction term.

Alternatively, you can find optimal values of fitrgam name-value arguments by using the OptimizeHyperparameters name-value argument. For an example, see Optimize GAM Using OptimizeHyperparameters.

Load the patients data set.

load patients

Create a table that contains the predictor variables (Age, Diastolic, Smoker, Weight, Gender, and SelfAssessedHealthStatus) and the response variable (Systolic).

tbl = table(Age,Diastolic,Smoker,Weight,Gender,SelfAssessedHealthStatus,Systolic);

Create a cross-validated GAM by using the default cross-validation option. Specify the 'CrossVal' name-value argument as 'on'. Also, specify to include 5 interaction terms.

rng('default') % For reproducibility

CVMdl = fitrgam(tbl,'Systolic','CrossVal','on','Interactions',5);

If you specify 'Mode' as 'cumulative' for kfoldLoss, then the function returns cumulative errors, which are the average errors across all folds obtained using the same number of trees for each fold. Display the number of trees for each fold.

CVMdl.NumTrainedPerFold

ans = struct with fields:

PredictorTrees: [300 300 300 300 300 300 300 300 300 300]

InteractionTrees: [76 100 100 100 100 42 100 100 59 100]

kfoldLoss can compute cumulative errors using up to 300 predictor trees and 42 interaction trees.
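The two limits follow from taking the smallest tree count across folds. The sketch below reproduces them from the values displayed above; the struct numTrained is a hand-made stand-in for CVMdl.NumTrainedPerFold, so the code runs without the trained model.

```matlab
% Tree counts per fold, copied from the CVMdl.NumTrainedPerFold output above.
numTrained = struct( ...
    'PredictorTrees',   [300 300 300 300 300 300 300 300 300 300], ...
    'InteractionTrees', [76 100 100 100 100 42 100 100 59 100]);

% A cumulative loss averages over all folds, so it can only use as many
% trees as the fold with the fewest trees contains.
maxPredictorTrees   = min(numTrained.PredictorTrees)
maxInteractionTrees = min(numTrained.InteractionTrees)
```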

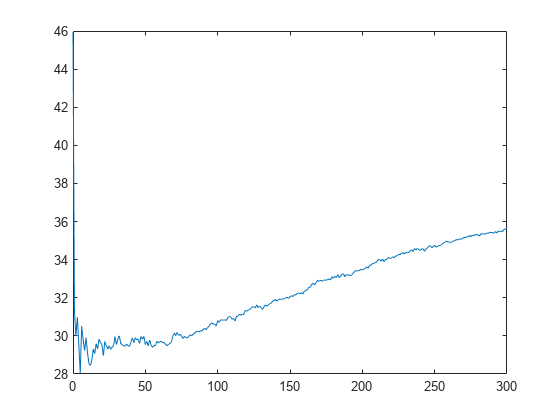

Plot the cumulative, 10-fold cross-validated, mean squared errors. Specify 'IncludeInteractions' as false to exclude interaction terms from the computation.

L_noInteractions = kfoldLoss(CVMdl,'Mode','cumulative','IncludeInteractions',false);

figure

plot(0:min(CVMdl.NumTrainedPerFold.PredictorTrees),L_noInteractions)

The first element of L_noInteractions is the average error over all folds obtained using only the intercept (constant) term. The (J+1)th element of L_noInteractions is the average error obtained using the intercept term and the first J predictor trees per linear term. Plotting the cumulative loss allows you to monitor how the error changes as the number of predictor trees in the GAM increases.

Find the minimum error and the number of predictor trees used to achieve the minimum error.

[M,I] = min(L_noInteractions)

M = 28.0506

I = 6

The GAM achieves the minimum error when it includes 5 predictor trees.
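The index I is one larger than the tree count because the first element of the cumulative loss vector corresponds to zero trees (intercept only). A small sketch of that offset, using a made-up loss vector L_toy whose values are hypothetical and chosen only so that the minimum lands at index 6:

```matlab
% Hypothetical cumulative losses: element 1 uses only the intercept,
% element J+1 uses the intercept plus the first J predictor trees.
L_toy = [40.0; 35.0; 31.0; 30.0; 29.0; 28.0; 28.5];

[minLoss, idx] = min(L_toy);

% Subtract 1 because element 1 corresponds to 0 trees.
bestNumTrees = idx - 1
```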

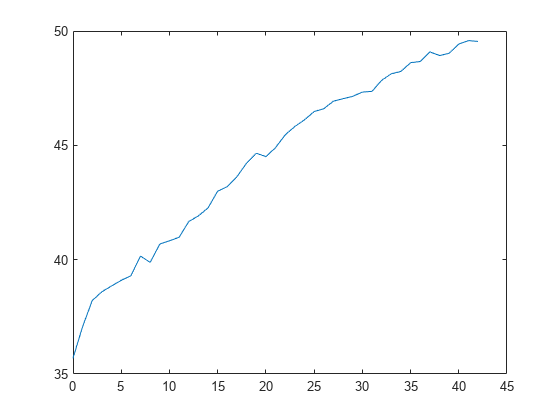

Compute the cumulative mean squared error using both linear terms and interaction terms.

L = kfoldLoss(CVMdl,'Mode','cumulative');

figure

plot(0:min(CVMdl.NumTrainedPerFold.InteractionTrees),L)

The first element of L is the average error over all folds obtained using the intercept (constant) term and all predictor trees per linear term. The (J+1)th element of L is the average error obtained using the intercept term, all predictor trees per linear term, and the first J interaction trees per interaction term. The plot shows that the error increases when interaction terms are added.

If you are satisfied with the error when the number of predictor trees is 5, you can create a predictive model by training the univariate GAM again and specifying 'NumTreesPerPredictor',5 without cross-validation.
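A minimal sketch of that final training step, assuming the same patients table as above and Statistics and Machine Learning Toolbox on the path. The variable names Mdl and yfit are illustrative, and predicting on the first five training rows is only a smoke test, not an estimate of generalization error.

```matlab
load patients
tbl = table(Age,Diastolic,Smoker,Weight,Gender,SelfAssessedHealthStatus,Systolic);

% Univariate GAM (no interaction terms), no cross-validation,
% limited to 5 trees per predictor.
Mdl = fitrgam(tbl,'Systolic','NumTreesPerPredictor',5);

% Predict the response for the first five rows of the table.
yfit = predict(Mdl,tbl(1:5,:))
```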

Input Arguments

Cross-validated partitioned regression model, specified as a RegressionPartitionedModel, RegressionPartitionedEnsemble, RegressionPartitionedGAM, RegressionPartitionedGP, RegressionPartitionedNeuralNetwork, or RegressionPartitionedSVM object. You can create the object in two ways:

- Pass a trained regression model listed in the following table to its crossval object function.

- Train a regression model using a function listed in the following table and specify one of the cross-validation name-value arguments for the function.

| Regression Model | Function |
|---|---|
| RegressionEnsemble | fitrensemble |
| RegressionGAM | fitrgam |
| RegressionGP | fitrgp |
| RegressionNeuralNetwork | fitrnet |
| RegressionSVM | fitrsvm |
| RegressionTree | fitrtree |

Name-Value Arguments

Specify optional pairs of arguments as Name1=Value1,...,NameN=ValueN, where Name is the argument name and Value is the corresponding value. Name-value arguments must appear after other arguments, but the order of the pairs does not matter.

Before R2021a, use commas to separate each name and value, and enclose Name in quotes.

Example: kfoldLoss(CVMdl,'Folds',[1 2 3 5]) specifies to use the first, second, third, and fifth folds to compute the mean squared error, but to exclude the fourth fold.

Fold indices to use, specified as a positive integer vector. The elements of Folds must be within the range from 1 to CVMdl.KFold.

The software uses only the folds specified in Folds.

Example: 'Folds',[1 4 10]

Data Types: single | double
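A short sketch of the Folds argument, reusing the cross-validated regression tree from the carsmall example earlier on this page. It assumes Statistics and Machine Learning Toolbox is available; the variable names LSubset and LAll are illustrative.

```matlab
load carsmall
X = [Cylinders Displacement Horsepower Weight];
Y = MPG;

rng('default') % For reproducibility
CVMdl = fitrtree(X,Y,'CrossVal','on');   % 10 folds by default

% Loss computed from folds 1, 2, 3, and 5 only; fold 4 is excluded.
LSubset = kfoldLoss(CVMdl,'Folds',[1 2 3 5])

% Loss computed from all folds, for comparison.
LAll = kfoldLoss(CVMdl)
```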

Flag to include interaction terms of the model, specified as true or false. This argument is valid only for a generalized additive model (GAM). That is, you can specify this argument only when CVMdl is RegressionPartitionedGAM.

The default value is true if the models in CVMdl (CVMdl.Trained) contain interaction terms. The value must be false if the models do not contain interaction terms.

Example: 'IncludeInteractions',false

Data Types: logical

Loss function, specified as 'mse' or a function handle.

- Specify the built-in function 'mse'. In this case, the loss function is the mean squared error.

- Specify your own function using function handle notation.

  Assume that n is the number of observations in the training data (CVMdl.NumObservations). Your function must have the signature lossvalue = lossfun(Y,Yfit,W), where:

  - The output argument lossvalue is a scalar.
  - You specify the function name (lossfun).
  - Y is an n-by-1 numeric vector of observed responses.
  - Yfit is an n-by-1 numeric vector of predicted responses.
  - W is an n-by-1 numeric vector of observation weights.

  Specify your function using 'LossFun',@lossfun.

Data Types: char | string | function_handle
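As an illustration of the required signature, the sketch below defines a weighted mean absolute error as an anonymous function and checks it on three hand-made observations. The name maeLoss is illustrative and is not a built-in loss; the toy vectors are made up for the check.

```matlab
% Weighted mean absolute error with the signature lossfun(Y,Yfit,W).
% The name maeLoss is illustrative, not a built-in loss.
maeLoss = @(Y,Yfit,W) sum(W .* abs(Y - Yfit)) / sum(W);

% Check on a small hand-made example with equal weights.
Y    = [1; 2; 3];
Yfit = [1; 3; 5];
W    = [1; 1; 1];
lossvalue = maeLoss(Y,Yfit,W)
```

To use the function with a cross-validated model, pass the handle directly, for example kfoldLoss(CVMdl,'LossFun',maeLoss).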

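Aggregation level for the output, specified as 'average', 'individual', or 'cumulative'.

| Value | Description |
|---|---|
| 'average' | The output is a scalar average over all folds. |
| 'individual' | The output is a vector of length k containing one value per fold, where k is the number of folds. |
| 'cumulative' | The output is a column vector of cumulative average losses; see the description of L under Output Arguments for its length and contents. Note: That description covers this value only when CVMdl is a RegressionPartitionedEnsemble or RegressionPartitionedGAM object. |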

Example: 'Mode','individual'

Since R2023b

Predicted response value to use for observations with missing predictor values, specified as "median", "mean", "omitted", or a numeric scalar. This argument is valid only for a Gaussian process regression, neural network, or support vector machine model. That is, you can specify this argument only when CVMdl is a RegressionPartitionedGP, RegressionPartitionedNeuralNetwork, or RegressionPartitionedSVM object.

| Value | Description |
|---|---|
| "median" | kfoldLoss uses the median of the observed response values in the training-fold data as the predicted response value for observations with missing predictor values. This value is the default. |
| "mean" | kfoldLoss uses the mean of the observed response values in the training-fold data as the predicted response value for observations with missing predictor values. |
| "omitted" | kfoldLoss excludes observations with missing predictor values from the loss computation. |
| Numeric scalar | kfoldLoss uses this value as the predicted response value for observations with missing predictor values. |

If an observation is missing an observed response value or an observation weight, then kfoldLoss does not use the observation in the loss computation.

Example: "PredictionForMissingValue","omitted"

Data Types: single | double | char | string

Output Arguments

Loss, returned as a numeric scalar or numeric column vector.

By default, the loss is the mean squared error between the validation-fold observations and the predictions made with a regression model trained on the training-fold observations.

- If Mode is 'average', then L is the average loss over all folds.

- If Mode is 'individual', then L is a k-by-1 numeric column vector containing the loss for each fold, where k is the number of folds.

- If Mode is 'cumulative' and CVMdl is RegressionPartitionedEnsemble, then L is a min(CVMdl.NumTrainedPerFold)-by-1 numeric column vector. Each element j is the average loss over all folds that the function obtains using ensembles trained with weak learners 1:j.

- If Mode is 'cumulative' and CVMdl is RegressionPartitionedGAM, then the output value depends on the IncludeInteractions value.

  - If IncludeInteractions is false, then L is a (1 + min(NumTrainedPerFold.PredictorTrees))-by-1 numeric column vector. The first element of L is the average loss over all folds that is obtained using only the intercept (constant) term. The (j + 1)th element of L is the average loss obtained using the intercept term and the first j predictor trees per linear term.

  - If IncludeInteractions is true, then L is a (1 + min(NumTrainedPerFold.InteractionTrees))-by-1 numeric column vector. The first element of L is the average loss over all folds that is obtained using the intercept (constant) term and all predictor trees per linear term. The (j + 1)th element of L is the average loss obtained using the intercept term, all predictor trees per linear term, and the first j interaction trees per interaction term.

Alternative Functionality

If you want to compute the cross-validated loss of a tree model, you can avoid constructing a RegressionPartitionedModel object by calling cvloss. Creating a cross-validated tree object can save you time if you plan to examine it more than once.

Extended Capabilities

Usage notes and limitations:

This function fully supports GPU arrays for the following models.

- RegressionPartitionedModel object fitted using fitrtree, or by passing a RegressionTree object to crossval

For more information, see Run MATLAB Functions on a GPU (Parallel Computing Toolbox).

Version History

Introduced in R2011a

kfoldLoss fully supports GPU arrays for RegressionPartitionedGP models.

kfoldLoss fully supports GPU arrays for RegressionPartitionedNeuralNetwork models.

Starting in R2023b, when you predict or compute the loss, some regression models allow you to specify the predicted response value for observations with missing predictor values. Specify the PredictionForMissingValue name-value argument to use a numeric scalar, the training set median, or the training set mean as the predicted value. When computing the loss, you can also specify to omit observations with missing predictor values.

This table lists the object functions that support the PredictionForMissingValue name-value argument. By default, the functions use the training set median as the predicted response value for observations with missing predictor values.

| Model Type | Model Objects | Object Functions |
|---|---|---|
| Gaussian process regression (GPR) model | RegressionGP, CompactRegressionGP | loss, predict, resubLoss, resubPredict |
| | RegressionPartitionedGP | kfoldLoss, kfoldPredict |
| Gaussian kernel regression model | RegressionKernel | loss, predict |
| | RegressionPartitionedKernel | kfoldLoss, kfoldPredict |
| Linear regression model | RegressionLinear | loss, predict |
| | RegressionPartitionedLinear | kfoldLoss, kfoldPredict |
| Neural network regression model | RegressionNeuralNetwork, CompactRegressionNeuralNetwork | loss, predict, resubLoss, resubPredict |
| | RegressionPartitionedNeuralNetwork | kfoldLoss, kfoldPredict |
| Support vector machine (SVM) regression model | RegressionSVM, CompactRegressionSVM | loss, predict, resubLoss, resubPredict |
| | RegressionPartitionedSVM | kfoldLoss, kfoldPredict |

In previous releases, the regression model loss and predict functions listed above used NaN predicted response values for observations with missing predictor values. The software omitted observations with missing predictor values from the resubstitution ("resub") and cross-validation ("kfold") computations for prediction and loss.

Starting in R2023a, kfoldLoss fully supports GPU arrays for RegressionPartitionedSVM models.
