Implementation of a PSO algorithm with the same syntax as the Genetic Algorithm Toolbox.


Previously titled "Another Particle Swarm Toolbox"

Introduction

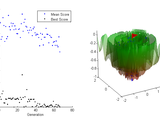

Particle swarm optimization (PSO) is a derivative-free global optimization solver. It is inspired by the surprisingly organized behaviour of large groups of simple animals, such as flocks of birds, schools of fish, or swarms of locusts. The individual creatures, or "particles", in this algorithm are primitive, knowing only four simple things: 1) their own current location in the search space, 2) the fitness value at that location, 3) their previous personal best location, and 4) the overall best location found by all the particles in the "swarm". There are no gradients or Hessians to calculate. Each particle continually adjusts its speed and trajectory in the search space based on this information, so that over successive iterations the swarm tends to converge on the best regions it has found. As seen in nature, this computational swarm displays a remarkable level of coherence and coordination despite the simplicity of its individual particles.
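The update rule described above fits in a few lines. The sketch below is a minimal global-best PSO with an inertia weight, written in Python for illustration only: the function name `pso_minimize`, the parameter values (w = 0.7, c1 = c2 = 1.5), and the clamping of positions to the bounds are assumptions, not the toolbox's defaults or its PSOITERATE implementation.

```python
import random


def pso_minimize(f, lb, ub, n_particles=30, n_iter=200,
                 w=0.7, c1=1.5, c2=1.5, seed=0):
    """Minimal global-best PSO (illustrative sketch; parameters are assumptions)."""
    rng = random.Random(seed)
    dim = len(lb)
    # Random initial positions inside the bounds; zero initial velocities.
    pos = [[rng.uniform(lb[d], ub[d]) for d in range(dim)]
           for _ in range(n_particles)]
    vel = [[0.0] * dim for _ in range(n_particles)]
    # Each particle remembers its personal best; the swarm shares a global best.
    pbest = [p[:] for p in pos]
    pbest_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(dim):
                r1, r2 = rng.random(), rng.random()
                # Inertia + cognitive pull toward pbest + social pull toward gbest.
                vel[i][d] = (w * vel[i][d]
                             + c1 * r1 * (pbest[i][d] - pos[i][d])
                             + c2 * r2 * (gbest[d] - pos[i][d]))
                # Move, then clamp the coordinate back into [lb, ub].
                pos[i][d] = min(max(pos[i][d] + vel[i][d], lb[d]), ub[d])
            val = f(pos[i])
            # No gradients: only fitness comparisons update the memories.
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val
```

Under these assumptions, calling `pso_minimize(lambda x: sum(v * v for v in x), [-5.0, -5.0], [5.0, 5.0])` should return a point very close to the origin, the minimum of the sphere function.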

Ease of Use

If you are already using the Genetic Algorithm (GA) included with MATLAB's Global Optimization Toolbox, this PSO toolbox will save you a great deal of time. It can be called from the MATLAB command line using the same syntax as the GA, with some additional options specific to PSO, which allows a high degree of code reuse between the two toolboxes. GA-specific parameters such as crossover and mutation functions do not apply to the PSO algorithm. However, many of the commonly used Genetic Algorithm Toolbox options can be used interchangeably with PSO, since both are iterative, population-based solvers. See >> help pso (from the ./psopt directory) for more details.

Features

* Support for distributed computing using MATLAB's Parallel Computing Toolbox.

* Full support for bounded, linear, and nonlinear constraints.

* Modular and customizable.

* Binary optimization. See the PSOBINARY function for details.

* Vectorized fitness functions.

* Solver parameters controlled using 'options' structure similar to existing MATLAB optimization solvers.

* User-defined custom plots may be written using the same template as GA plotting functions.

* Another optimization solver may be called as a "hybrid function" to refine PSO results.
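Binary optimization is listed above without detail. For orientation, one common binary PSO variant (Kennedy and Eberhart's discrete binary PSO) keeps real-valued velocities and sets each bit to 1 with probability sigmoid(velocity). The Python sketch below assumes that variant; PSOBINARY may work differently, and the function name `binary_pso_minimize` and the parameter values (c1 = c2 = 2, velocity clamp of 4) are illustrative assumptions.

```python
import math
import random


def binary_pso_minimize(f, n_bits, n_particles=20, n_iter=200,
                        c1=2.0, c2=2.0, v_max=4.0, seed=1):
    """Minimize f over bit lists with a sigmoid-based binary PSO (sketch)."""
    rng = random.Random(seed)
    pos = [[rng.randint(0, 1) for _ in range(n_bits)]
           for _ in range(n_particles)]
    vel = [[0.0] * n_bits for _ in range(n_particles)]
    pbest = [p[:] for p in pos]
    pbest_val = [f(p) for p in pos]
    g = min(range(n_particles), key=lambda i: pbest_val[i])
    gbest, gbest_val = pbest[g][:], pbest_val[g]

    for _ in range(n_iter):
        for i in range(n_particles):
            for d in range(n_bits):
                # Same pulls toward pbest and gbest as continuous PSO, per bit.
                v = (vel[i][d]
                     + c1 * rng.random() * (pbest[i][d] - pos[i][d])
                     + c2 * rng.random() * (gbest[d] - pos[i][d]))
                # Clamp the velocity so each bit keeps some chance of flipping
                # (sigmoid(4) is about 0.982).
                vel[i][d] = max(-v_max, min(v_max, v))
                # The sigmoid of the velocity is the probability that the bit is 1.
                p_one = 1.0 / (1.0 + math.exp(-vel[i][d]))
                pos[i][d] = 1 if rng.random() < p_one else 0
            val = f(pos[i])
            if val < pbest_val[i]:
                pbest[i], pbest_val[i] = pos[i][:], val
                if val < gbest_val:
                    gbest, gbest_val = pos[i][:], val
    return gbest, gbest_val
```

For instance, minimizing the number of zero bits in a 10-bit string (a toy "OneMax"-style problem) should recover the all-ones string.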

A demo function is included, with a small library of test functions. To run the demo, from the psopt directory, call >> psodemo with no inputs or outputs.

Bug reports and feature requests are welcome.

Special thanks to the following people for contributing code and bug fixes:

* Ben Xin Kang of the University of Hong Kong

* Christian Hansen of the University of Hannover

* Erik Schreurs from the MATLAB Central community

* J. Oliver of Brigham Young University

* Michael Johnston of the IRIS toolbox

* Ziqiang (Kevin) Chen

Bibliography

* J Kennedy, RC Eberhart, YH Shi. Swarm Intelligence. Academic Press, 2001.

* Particle swarm optimization. Wikipedia. http://en.wikipedia.org/wiki/Particle_swarm_optimization

* RE Perez, K Behdinan. Particle swarm approach for structural design optimization. Computers and Structures 85 (2007) 1579–1588.

* SM Mikki, AA Kishk. Particle Swarm Optimization: A Physics-Based Approach. Morgan & Claypool, 2008.

Addendum A

Nonlinear inequality constraints of the form c(x) ≤ 0 and nonlinear equality constraints of the form ceq(x) = 0 are now fully implemented. The 'penalize' constraint boundary enforcement method is now the default. It has been redesigned and tested extensively, and should work with all types of constraints.
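To make the penalty idea concrete, here is a fixed-weight quadratic penalty wrapper in Python. It is deliberately simpler than the adaptive penalty of Perez and Behdinan listed in the bibliography, and it is not this toolbox's implementation; the function name `make_penalized_fitness`, the weight `rho`, and the equality tolerance `tol` are assumptions. The constraint function follows the MATLAB convention of returning both c and ceq.

```python
def make_penalized_fitness(fitness, nonlcon, rho=1e3, tol=1e-6):
    """Wrap fitness(x) with a fixed quadratic penalty for nonlinear constraints.

    nonlcon(x) returns (c, ceq): lists of values that should satisfy
    c_i(x) <= 0 and ceq_j(x) = 0 (equalities are accepted within +/- tol).
    """
    def penalized(x):
        c, ceq = nonlcon(x)
        # Only positive c_i(x) values violate c_i(x) <= 0.
        violation = sum(max(0.0, ci) ** 2 for ci in c)
        # Equality constraints are relaxed to |ceq_j(x)| <= tol.
        violation += sum(max(0.0, abs(e) - tol) ** 2 for e in ceq)
        # Feasible points keep their raw fitness; infeasible ones pay extra.
        return fitness(x) + rho * violation
    return penalized
```

Passing the wrapped function to an unconstrained swarm lets infeasible particles keep moving, but at a cost that grows with how badly they violate the constraints; a larger `rho` pushes the swarm toward feasibility more aggressively.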

See the following document for the proper syntax for defining nonlinear constraint functions: http://www.mathworks.com/help/optim/ug/writing-constraints.html#brhkghv-16.

To see a demonstration of nonlinear inequality constraints using a quadrifolium overlaid on Rosenbrock's function, run PSODEMO and choose 'nonlinearconstrdemo' as the test function.
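A constraint like the one in that demo can be written in the c(x) ≤ 0 form. In polar coordinates x² − y² = r² cos 2θ, so the interior of the unit quadrifolium r = cos 2θ is the set where (x² + y²)³ ≤ (x² − y²)². The Python sketch below encodes that region alongside Rosenbrock's function; the unit scale and the function names are assumptions, and 'nonlinearconstrdemo' may scale or position the curve differently. Rosenbrock's unconstrained minimum at (1, 1) lies outside this region, so the constraint is active at the constrained optimum.

```python
def rosenbrock(x):
    """Rosenbrock's function; unconstrained minimum f(1, 1) = 0."""
    return (1.0 - x[0]) ** 2 + 100.0 * (x[1] - x[0] ** 2) ** 2


def quadrifolium_constraint(x):
    """Return (c, ceq) with c <= 0 inside the unit four-leaf rose r = cos(2*theta).

    Since x**2 - y**2 = r**2 * cos(2*theta), the test r**2 <= cos(2*theta)**2
    becomes (x**2 + y**2)**3 <= (x**2 - y**2)**2 after multiplying by r**4.
    """
    r2 = x[0] ** 2 + x[1] ** 2
    c = r2 ** 3 - (x[0] ** 2 - x[1] ** 2) ** 2
    return [c], []  # one inequality constraint, no equality constraints
```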

Addendum B

See the following guide in the GA toolbox documentation to get started with the Parallel Computing Toolbox.

http://www.mathworks.com/help/gads/genetic-algorithm-options.html#f17234

Addendum C

If you are just starting out and hoping to learn to use this toolbox for work or school, here are some essential readings:

* MATLAB's Optimization Toolbox: http://www.mathworks.com/help/optim/index.html

* MATLAB's Global Optimization Toolbox: http://www.mathworks.com/help/gads/index.html

* MATLAB's Genetic Algorithm: http://www.mathworks.com/help/gads/genetic-algorithm.html

Addendum D

There is now a particle swarm optimizer included with the Global Optimization Toolbox. If you have a recent version of the Global Optimization Toolbox installed, you will need to set the path appropriately in your code to use this toolbox.

Cite As

S Chen. Constrained Particle Swarm Optimization (2009-2018). MATLAB File Exchange. https://www.mathworks.com/matlabcentral/fileexchange/25986.

Acknowledgements

Inspired by: Particle Swarm Optimization Toolbox, Test functions for global optimization algorithms

Inspired: benpesen/optFUMOLA, Co-Blade: Software for Analysis and Design of Composite Blades

General Information

- Version 1.31.4 (40.6 KB)


MATLAB Release Compatibility

- Compatible with any release

Platform Compatibility

- Windows

- macOS

- Linux


| Version | Release Notes |
|---|---|
| 1.31.4 | Fixed an error in checking for fmincon when it is defined as the hybrid function. In earlier versions of MATLAB, fmincon was an `*.m` file; it has since been changed to a `*.p` file. Thanks to Martin Hallmann for pointing this out. |
| 1.31.3 | Fixed an error related to generating the initial population with constraints. |
| 1.31.2.0 | Updated documentation. |
| 1.31.1.0 | Added link to GitHub repository. |
| 1.31.0.0 | Fixed a typo which caused improper handling of bounded constraints in determining the initial particle distribution. Thanks to Erik for pointing out this major bug! |
| 1.30.0.0 | Minor bug fix and efficiency improvements related to nonlinear constraint checking code as well as parallel computing capabilities. |
| 1.29.0.0 | Fixed bug involving verbosity checks before displaying warnings. |
| 1.27.0.0 | Implemented parallel computing capability. A few minor improvements. See the included release notes file for details. |
| 1.26.0.0 | Fixed namespace problem with one of the `*.m` files. |
| 1.25.0.0 | Merged previously lost updates from version 20100818. Fixed bugs related to nonlinear constraint handling. See the release notes file included with the toolbox for details. |
| 1.22.0.0 | Updated description. |
| 1.20.0.0 | The previous upload was a zip bomb. Rearranged the contents of the `*.zip` file to behave nicely when unpacked. |
| 1.18.0.0 | Implemented an alternative, penalty-based method of constraint enforcement as described in Perez and Behdinan's 2007 paper. See description for details. |
| 1.14.0.0 | Minor bug fix. Thanks to Ben for pointing this out. |
| 1.13.0.0 | A time limit can now be set, and a custom swarm acceleration function can be defined using the 'AccelerationFcn' option in PSOOPTIMSET (default is PSOITERATE). See release notes for more details. |
| 1.12.0.0 | Added support for problems with binary variables. Minor bug fixes. See release notes for details. |
| 1.11.0.0 | Output to the command window can now be suppressed using the options structure. See release notes for details. |
| 1.10.0.0 | Robustness improvements, minor bug fixes. |
| 1.9.0.0 | Updated description. Minor performance and robustness improvements. |
| 1.8.0.0 | New features: nonlinear equality constraints; ability to define the initial swarm state. |
| 1.7.0.0 | Nonlinear inequality constraints can now be used, with 'soft' boundaries only; psodemo now has a 'fast' setting requiring less intensive 3D graphics. See release notes for more details. |
| 1.6.0.0 | Various bug fixes. Implemented 'absorb' style of boundaries for linear constraints. Social and cognitive attraction parameters can now be adjusted through the options structure. See release notes for details. |
| 1.5.0.0 | Bug fix, minor visual improvements. See release notes for details. |
| 1.4.0.0 | Major bug fix. New features, including the ability to call a hybrid function to further refine the final result of the swarm algorithm. See release notes for complete details. |
| 1.3.0.0 | Minor bug fixes, more detailed description. Forgot to update the zip file last time. |
| 1.2.0.0 | Small bug fix, more detailed description. |
| 1.0.0.0 | |