Work with HDF5 Virtual Datasets (VDS)

Overview

The HDF5 Virtual Dataset (VDS) feature allows you to access data from a collection of HDF5 files as a single, unified dataset, without modifying how the data is stored in the original files. Virtual datasets can have unlimited dimensions and map to source datasets with unlimited dimensions. This mapping allows a Virtual Dataset to grow over time, as its underlying source datasets change in size.

The VDS feature was introduced in the HDF5 library version 1.10. To use VDS, you must be familiar with the HDF5 VDS programming model. For more information, see the HDF5 Virtual Dataset documentation on The HDF Group website.

Note

MATLAB® versions earlier than R2021b cannot read HDF5 Virtual Datasets.
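As a quick sanity check before working with Virtual Datasets, you can query the HDF5 library version and the MATLAB release. This is a minimal sketch; the variable names are illustrative, and the check relies on R2021b corresponding to MATLAB version 9.11.

```matlab
% Query the version of the HDF5 library that MATLAB uses.
[majnum,minnum,relnum] = H5.get_libversion();
fprintf('HDF5 library version: %d.%d.%d\n',majnum,minnum,relnum);

% The VDS feature requires HDF5 library version 1.10 or later.
libraryHasVDS = (majnum > 1) || (majnum == 1 && minnum >= 10);

% R2021b corresponds to MATLAB version 9.11.
matlabReadsVDS = ~verLessThan('matlab','9.11');

if ~(libraryHasVDS && matlabReadsVDS)
    warning('This MATLAB installation might not support HDF5 Virtual Datasets.');
end
```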

Create a Virtual Dataset

Follow these general steps for building a Virtual Dataset:

1. Create datasets that comprise the VDS (the source datasets) (optional).
2. Create the VDS.
   a. Define a datatype.
   b. Define a dataspace.
   c. Define the dataset creation property list.
   d. Map elements from the source datasets to the elements of the VDS.
      i. Iterate over the source datasets.
      ii. Select elements in the source dataset (source selection).
      iii. Select elements in the Virtual Dataset (destination selection).
      iv. Map destination selections to source selections using a dataset creation property list call.
      v. End the iteration.
   e. Call H5D.create using the defined properties.
3. Access the VDS as a regular HDF5 dataset.
4. Close the VDS when finished.
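The general steps can be sketched with two small source datasets stacked side by side. This is an illustrative sketch, not a definitive implementation: the file names sketch_src1.h5, sketch_src2.h5, and sketch_vds.h5 and the dataset names /a and /stacked are hypothetical, and the default file access property list is assumed to be sufficient.

```matlab
% Hypothetical file names for this sketch; older copies are removed first.
srcFiles = {'sketch_src1.h5','sketch_src2.h5'};
vdsFile = 'sketch_vds.h5';
srcData = {reshape(1:12,4,3), reshape(101:112,4,3)};  % two 4-by-3 arrays

% Create the source datasets (optional step) with the high-level interface.
for k = 1:numel(srcFiles)
    if isfile(srcFiles{k}), delete(srcFiles{k}); end
    h5create(srcFiles{k},'/a',[4 3]);
    h5write(srcFiles{k},'/a',srcData{k});
end

% Define the datatype, dataspace, and dataset creation property list.
% The VDS is 4-by-6 in MATLAB terms, so its C-style dimensions are [6 4].
fileID = H5F.create(vdsFile,'H5F_ACC_TRUNC','H5P_DEFAULT','H5P_DEFAULT');
typeID = H5T.copy('H5T_NATIVE_DOUBLE');
vdsSpaceID = H5S.create_simple(2,[6 4],[]);
dcplID = H5P.create('H5P_DATASET_CREATE');
H5P.set_layout(dcplID,'H5D_VIRTUAL');

% Iterate over the source datasets and map each one into the VDS.
srcSpaceID = H5S.create_simple(2,[3 4],[]);  % source selection: whole dataset
for k = 1:numel(srcFiles)
    start = [3*(k-1) 0];                     % zero-based, C-style offset
    H5S.select_hyperslab(vdsSpaceID,'H5S_SELECT_SET',start,[],[],[3 4]);
    H5P.set_virtual(dcplID,vdsSpaceID,srcFiles{k},'/a',srcSpaceID);
end

% Call H5D.create using the defined properties.
dsetID = H5D.create(fileID,'/stacked',typeID,vdsSpaceID, ...
    'H5P_DEFAULT',dcplID,'H5P_DEFAULT');

% Close the VDS and the other open resources.
H5D.close(dsetID);
H5S.close(srcSpaceID);
H5S.close(vdsSpaceID);
H5P.close(dcplID);
H5T.close(typeID);
H5F.close(fileID);

% Access the VDS as a regular HDF5 dataset.
stacked = h5read(vdsFile,'/stacked');
matchesSources = isequal(stacked,[srcData{1} srcData{2}]);
```

If the mapping behaves as intended, stacked is a 4-by-6 array whose first three columns come from the first source file and whose last three columns come from the second.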

Note

The HDF5 C library uses C-style ordering for multidimensional arrays, whereas MATLAB uses FORTRAN-style ordering. For more information, see Report Data Set Dimensions.
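In practice, this ordering difference means that dimensions passed to low-level functions such as H5S.create_simple are the MATLAB dimensions in reverse order. A minimal sketch, using an illustrative 20-by-10 array:

```matlab
% A 20-by-10 MATLAB array (20 rows, 10 columns).
A = zeros(20,10);
matlabDims = size(A);          % [20 10]

% Reverse the dimensions to obtain the C-style ordering.
cDims = fliplr(matlabDims);    % [10 20]

% Low-level dataspace functions take the C-style dimensions.
spaceID = H5S.create_simple(2,cDims,[]);
H5S.close(spaceID);
```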

Work with Remotely Stored Virtual Datasets

You can use the MATLAB low-level HDF5 functions to create and read Virtual Datasets stored in remote locations, such as Amazon S3™ and Windows Azure® Blob Service. Use the high-level HDF5 functions to read and access information on Virtual Datasets stored in remote locations.

When accessing a Virtual Dataset stored in a remote location, you must specify the full path using a uniform resource locator (URL). For example, display the metadata of an HDF5 file stored on Amazon S3.

h5disp('s3://bucketname/path_to_file/my_VDSdata.h5');

For more information on setting up MATLAB to access your online storage service, see Work with Remote Data.

Create Virtual Dataset from Datasets of Varying Sizes

Create an HDF5 Virtual Dataset from datasets of varying sizes and with mismatching group and dataset names. The elevation data in the three datasets is generated from the MATLAB peaks function.

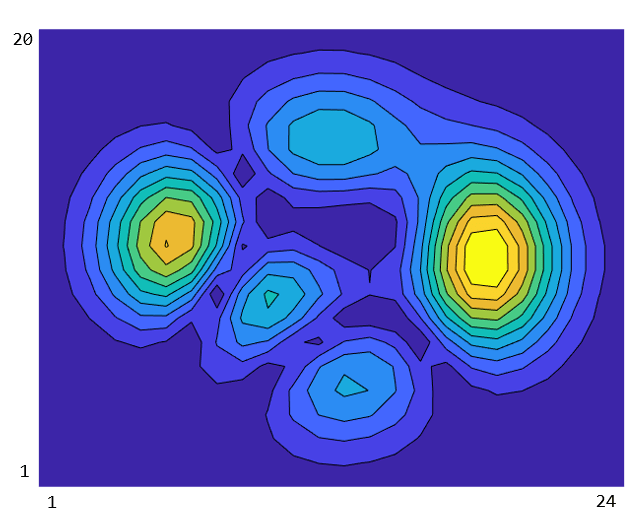

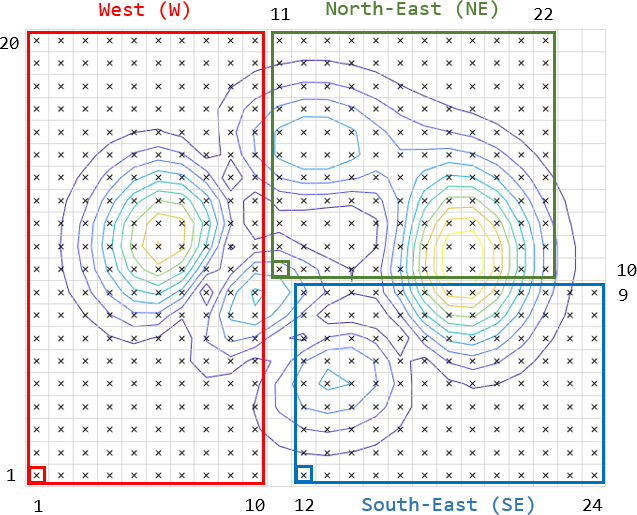

Use three datasets containing elevation data to reconstruct a larger, more complete topographical map. The three datasets are disjoint, meaning spatial data is missing along one or more of their shared dimensions, and they vary in shape and size.

Reconstruct the topographical profile with the three disjoint datasets. The sampling points from the three datasets are overlaid on the topographical profile according to their geospatial coordinates. In both plots, the x and y axes represent longitudes and latitudes, respectively, with latitudes increasing in the y-direction.
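If the three source files are not available, stand-in files with the same names, dataset paths, and sizes can be generated from the peaks function. This sketch is an assumption about how such data could be produced, not the original data: the grid spacing and the way the surface is split are hypothetical, so numeric values will differ from the output shown in this example. Existing files are left untouched.

```matlab
% Sample the peaks surface on a 20-by-24 grid (rows by columns).
[X,Y] = meshgrid(linspace(-3,3,24),linspace(-3,3,20));
Z = peaks(X,Y);

% Hypothetical split of the surface into three disjoint blocks.
pieces = struct( ...
    'file',{'data_W.h5','data_NE.h5','data_SE.h5'}, ...
    'dataset',{'/data/elevation','/z','/Elev'}, ...
    'data',{Z(:,1:10),Z(10:20,11:22),Z(1:9,12:24)});

% Write each block to its own file, skipping files that already exist.
for p = pieces
    if ~isfile(p.file)
        h5create(p.file,p.dataset,size(p.data));  % creates '/data' if needed
        h5write(p.file,p.dataset,p.data);
    end
end
```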

Display information on the three datasets in the files data_W.h5, data_NE.h5, and data_SE.h5. The h5disp function displays information about the datasets, including their names, datatypes, dimensions, and the groups to which they belong.

h5disp("data_W.h5")

HDF5 data_W.h5
Group '/'
    Group '/data'
        Dataset 'elevation'
            Size: 20x10
            MaxSize: 20x10
            Datatype: H5T_IEEE_F64LE (double)
            ChunkSize: []
            Filters: none
            FillValue: 0.000000

h5disp("data_NE.h5")

HDF5 data_NE.h5
Group '/'
    Dataset 'z'
        Size: 11x12
        MaxSize: 11x12
        Datatype: H5T_IEEE_F64LE (double)
        ChunkSize: []
        Filters: none
        FillValue: 0.000000

h5disp("data_SE.h5")

HDF5 data_SE.h5
Group '/'
    Dataset 'Elev'
        Size: 9x13
        MaxSize: 9x13
        Datatype: H5T_IEEE_F64LE (double)
        ChunkSize: []
        Filters: none
        FillValue: 0.000000
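The same dataset sizes can also be collected programmatically with the h5info function, which is convenient when planning the mapping. A minimal sketch, assuming the three files are in the current folder:

```matlab
% Source files and the dataset path inside each file.
files = ["data_W.h5","data_NE.h5","data_SE.h5"];
datasets = ["/data/elevation","/z","/Elev"];

% Print the MATLAB-style size of each source dataset.
for k = 1:numel(files)
    info = h5info(files(k),datasets(k));
    fprintf('%s%s: %s\n',files(k),datasets(k),mat2str(info.Dataspace.Size));
end
```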

Create a dataspace and a dataset creation property list for the Virtual Dataset. Then, map each source dataset to the virtual dataspace using C-style dimension ordering and zero-based indexing.

For instance, the lower-left corner of the data block for the North-East region corresponds to MATLAB indices [10 11]. To convert these indices to C-style ordered, zero-based indices, flip the indices [10 11] and subtract the value 1. The resulting start indices are [10 9].
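The conversion can be written as a small helper. This is an illustrative sketch; the function handle toCStart is a hypothetical name, and the MATLAB start indices for the West and South-East blocks are inferred from the mapping code in this example.

```matlab
% Convert one-based MATLAB indices to zero-based, C-style ordered indices.
toCStart = @(matlabIdx) fliplr(matlabIdx) - 1;

startW  = toCStart([1 1]);     % [0 0]
startNE = toCStart([10 11]);   % [10 9]
startSE = toCStart([1 12]);    % [11 0]

% Block sizes only need to be flipped, not shifted.
blockNE = fliplr([11 12]);     % [12 11]
```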

% Define the fill value and specify the file access property list identifier.
fillValue = NaN;
faplID = H5P.create('H5P_FILE_ACCESS');

% Create the file for the Virtual Dataset.
vdsFileID = H5F.create('data_VDS.h5','H5F_ACC_TRUNC','H5P_DEFAULT',faplID);

% Define the datatype and dataspace.
datatypeID = H5T.copy('H5T_NATIVE_DOUBLE');
vdsDataspaceID = H5S.create_simple(2,[24 20],[]);

% Initialize Virtual Dataset creation property list with virtual layout and fill value.
vdsDcplID = H5P.create('H5P_DATASET_CREATE');
H5P.set_layout(vdsDcplID,'H5D_VIRTUAL');
H5P.set_fill_value(vdsDcplID,datatypeID,fillValue);

% Perform full dataset mapping for three individual source datasets.

% Map '/data/elevation' from data_W.h5 to the Virtual Dataset.
srcDataspaceID_W = H5S.create_simple(2,[10 20],[]);
H5S.select_hyperslab(vdsDataspaceID,'H5S_SELECT_SET',[0 0],[],[],[10 20]);
H5P.set_virtual(vdsDcplID,vdsDataspaceID,'data_W.h5','/data/elevation',srcDataspaceID_W);

% Map '/z' from data_NE.h5 to the Virtual Dataset.
srcDataspaceID_NE = H5S.create_simple(2,[12 11],[]);
H5S.select_hyperslab(vdsDataspaceID,'H5S_SELECT_SET',[10 9],[],[],[12 11]);
H5P.set_virtual(vdsDcplID,vdsDataspaceID,'data_NE.h5','/z',srcDataspaceID_NE);

% Map '/Elev' from data_SE.h5 to the Virtual Dataset.
srcDataspaceID_SE = H5S.create_simple(2,[13 9],[]);
H5S.select_hyperslab(vdsDataspaceID,'H5S_SELECT_SET',[11 0],[],[],[13 9]);
H5P.set_virtual(vdsDcplID,vdsDataspaceID,'data_SE.h5','/Elev',srcDataspaceID_SE);

Create the Virtual Dataset /elevation in the file data_VDS.h5, and then close all open resources.

% Call H5D.create using the defined properties.
vdsDatasetID = H5D.create(vdsFileID,'/elevation',datatypeID,vdsDataspaceID, ...
    'H5P_DEFAULT',vdsDcplID,'H5P_DEFAULT');

% Close open resources.
H5D.close(vdsDatasetID);
H5S.close(srcDataspaceID_SE);
H5S.close(srcDataspaceID_NE);
H5S.close(srcDataspaceID_W);
H5P.close(vdsDcplID);
H5S.close(vdsDataspaceID);
H5T.close(datatypeID);
H5F.close(vdsFileID);
H5P.close(faplID);

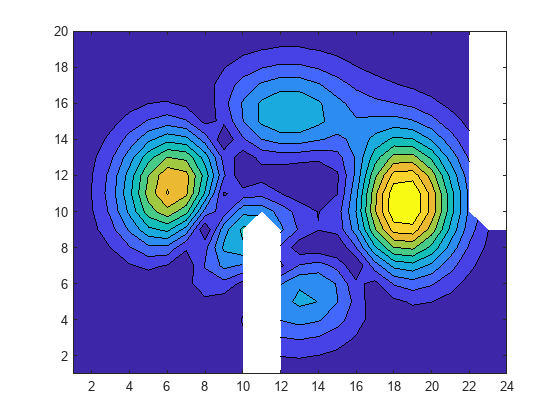

Read the Virtual Dataset and plot it.

The resulting plot is similar to the original plot of the topographical map, except for the areas where data is missing due to the disjoint datasets.

% Read elevations using the low-level interface.
vdsFileID = H5F.open('data_VDS.h5','H5F_ACC_RDONLY','H5P_DEFAULT');
vdsDatasetID = H5D.open(vdsFileID,'/elevation','H5P_DEFAULT');
elevation = H5D.read(vdsDatasetID,'H5ML_DEFAULT','H5S_ALL','H5S_ALL','H5P_DEFAULT');
H5D.close(vdsDatasetID);
H5F.close(vdsFileID);

contourf(elevation,10);
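Because the fill value is NaN, the unmapped areas can be located with isnan. The following sketch compares the number of fill-value cells with the count predicted by the mapping (the size of the VDS minus the sizes of the three source datasets). It assumes that the source datasets themselves contain no NaN values.

```matlab
% Read the whole Virtual Dataset with the high-level interface.
elevation = h5read('data_VDS.h5','/elevation');

% Cells not covered by any source dataset hold the fill value NaN.
missing = isnan(elevation);

% The VDS is 20-by-24; the sources are 20-by-10, 11-by-12, and 9-by-13.
expectedGaps = 20*24 - (20*10 + 11*12 + 9*13);

fprintf('%d of %d cells hold the fill value (mapping predicts %d).\n', ...
    nnz(missing),numel(elevation),expectedGaps);
```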

Display the contents of the Virtual Dataset file.

h5disp('data_VDS.h5')

HDF5 data_VDS.h5
Group '/'
    Dataset 'elevation'
        Size: 20x24
        MaxSize: 20x24
        Datatype: H5T_IEEE_F64LE (double)
        ChunkSize: []
        Filters: none
        FillValue: NaN
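The h5disp output does not list the source mappings. One way to inspect them is through the dataset creation property list. This sketch assumes that your MATLAB release provides the H5P.get_virtual_count, H5P.get_virtual_filename, and H5P.get_virtual_dsetname functions and that they take a zero-based mapping index, as the corresponding HDF5 C functions do.

```matlab
% Open the Virtual Dataset and retrieve its creation property list.
fileID = H5F.open('data_VDS.h5','H5F_ACC_RDONLY','H5P_DEFAULT');
dsetID = H5D.open(fileID,'/elevation','H5P_DEFAULT');
dcplID = H5D.get_create_plist(dsetID);

% List the source file and source dataset of each mapping.
numMappings = double(H5P.get_virtual_count(dcplID));
for idx = 0:numMappings-1
    srcFile = H5P.get_virtual_filename(dcplID,idx);
    srcDset = H5P.get_virtual_dsetname(dcplID,idx);
    fprintf('Mapping %d: %s -> %s\n',idx,srcFile,srcDset);
end

% Close open resources.
H5P.close(dcplID);
H5D.close(dsetID);
H5F.close(fileID);
```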

You can perform I/O operations on the Virtual Dataset. For instance, read a hyperslab of data from the /elevation dataset using the h5read function in the high-level interface.

sample = h5read('data_VDS.h5','/elevation',[1 1],[5 6],[4 4])

sample = 5×6

    0.0007    0.0102    0.0823    0.3265    0.0765    0.0059
    0.0028    0.0331    0.3023    2.9849    0.1901    0.4741
    0.1661    3.3570    2.5482    1.3171    4.8474    3.3676
    0.1915    3.9606    1.1585    0.9892    3.9113    2.5722
    0.0151    0.2633    1.0373    2.5380    0.9625    0.2401
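In the h5read call, the arguments [1 1], [5 6], and [4 4] are the start, count, and stride of the hyperslab in one-based MATLAB ordering. The following sketch checks that this selection matches strided indexing into the full array. The variable names are illustrative, and isequaln is used so that any NaN fill values compare as equal.

```matlab
% Read the full dataset and the strided hyperslab.
whole = h5read('data_VDS.h5','/elevation');
sample = h5read('data_VDS.h5','/elevation',[1 1],[5 6],[4 4]);

% Equivalent MATLAB indices: start at 1, step by 4, take 5 rows and 6 columns.
rows = 1:4:17;
cols = 1:4:21;
sameValues = isequaln(sample,whole(rows,cols));
```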

See Also

Read and Write Data Concurrently Using Single-Writer/Multiple-Reader (SWMR) | Property (H5P) | Dataspace (H5S)